The Genius Statue Problem

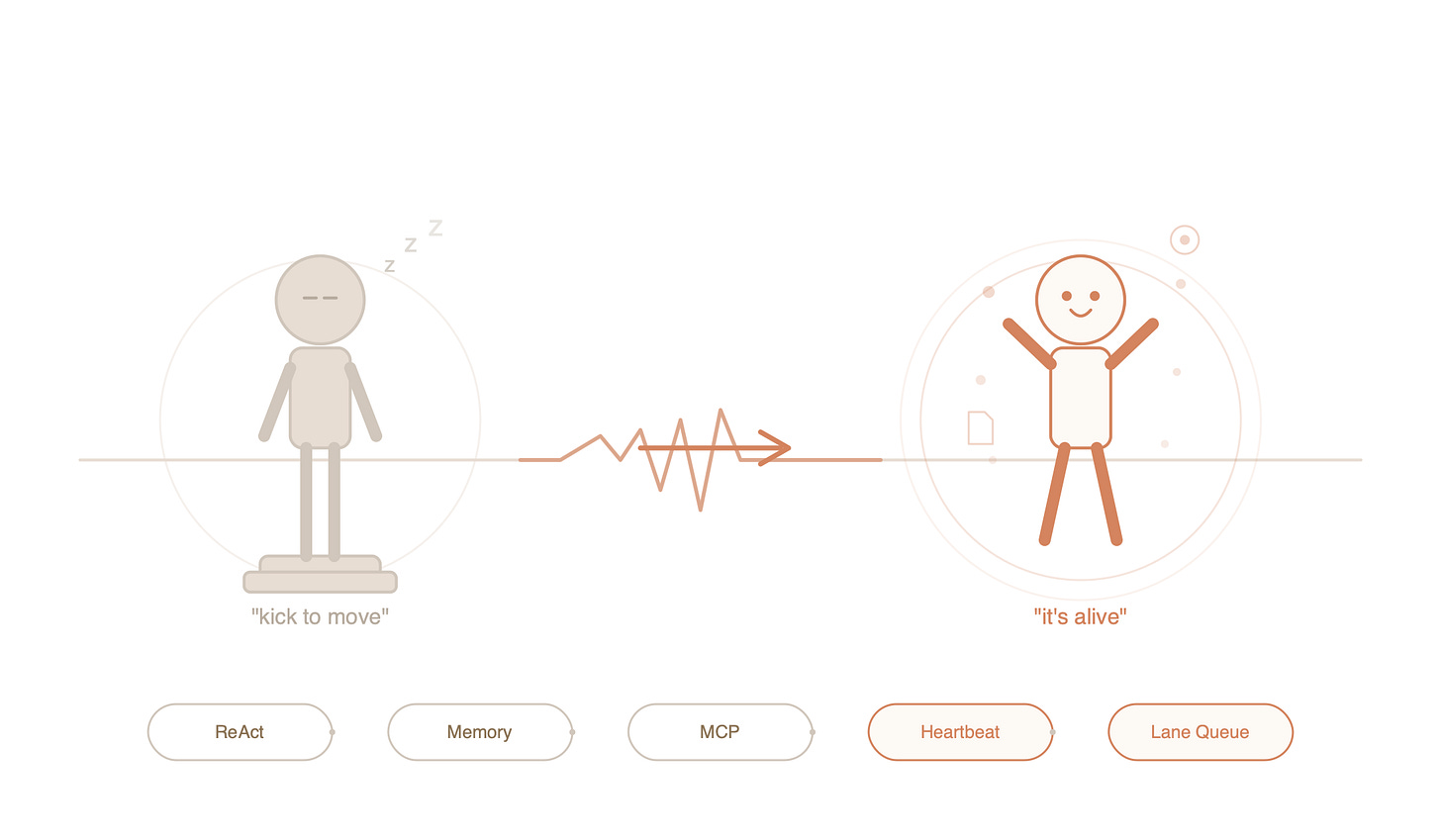

Picture the smartest person you’ve ever met. Now imagine they will only speak when spoken to—and I mean literally. You could seat them in a burning building and they’d sit there, perfectly calm, watching the ceiling melt, waiting for someone to ask, “Hey, is something wrong?”

That’s how large language models have worked since the beginning. No prompt, no action. They’re reactive in the most extreme sense of the word—less like a sleeping dog that might wake up if it smells smoke, and more like a toaster that will never, under any circumstances, make toast until you push the lever down.

This isn’t a flaw in the AI’s intelligence. It’s a flaw in its architecture. The model itself might be brilliant, but it lives inside a system that says: “You exist only when called upon.” Between calls, it doesn’t sleep. It doesn’t wait. It simply isn’t.

OpenClaw changed this. While you’re asleep at 3 AM, it can wake up, notice a failing test, and fix it. Not because it gained consciousness—but because someone finally engineered the plumbing to let a brilliant mind run on its own schedule.

To understand how, we need to trace three ideas that made it possible.

Part 1: The Ideas (What Makes It Tick)

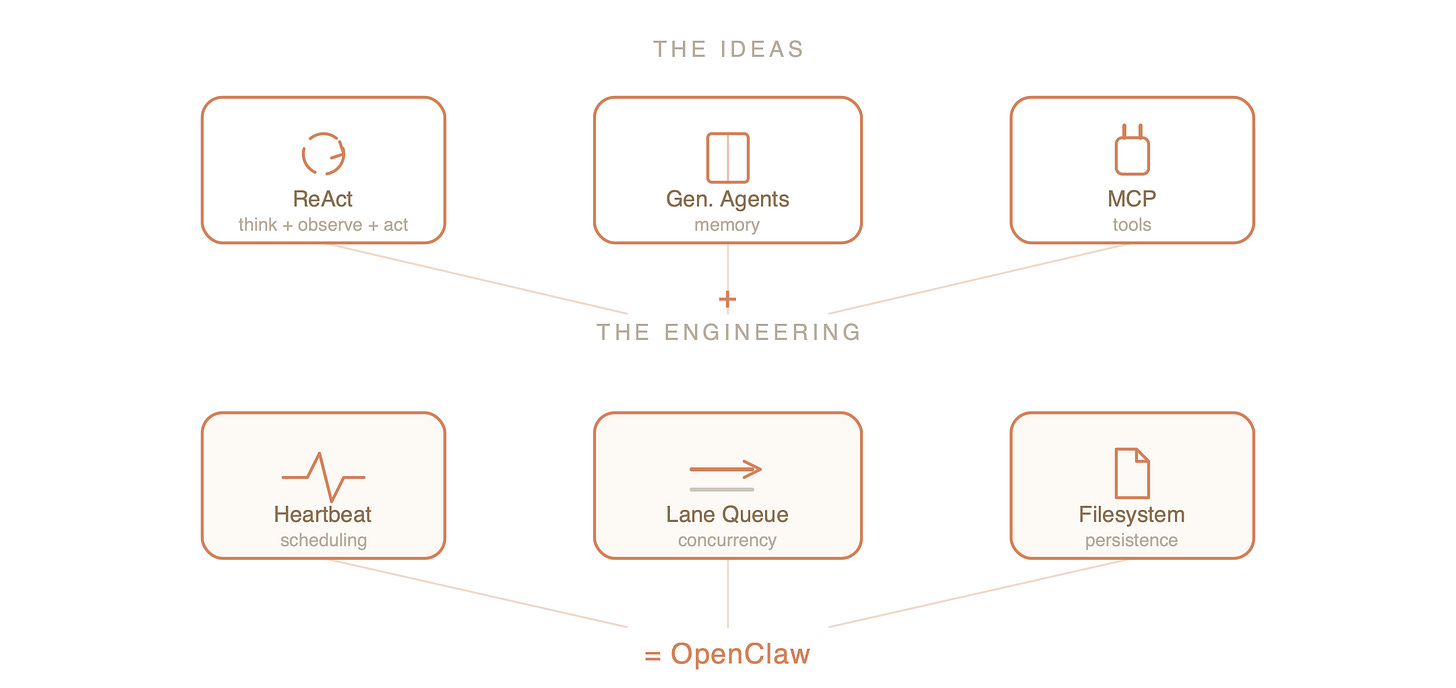

OpenClaw’s autonomy rests on three ideas. Each one solved a specific, fundamental limitation. Together, they form something greater than the sum of their parts.

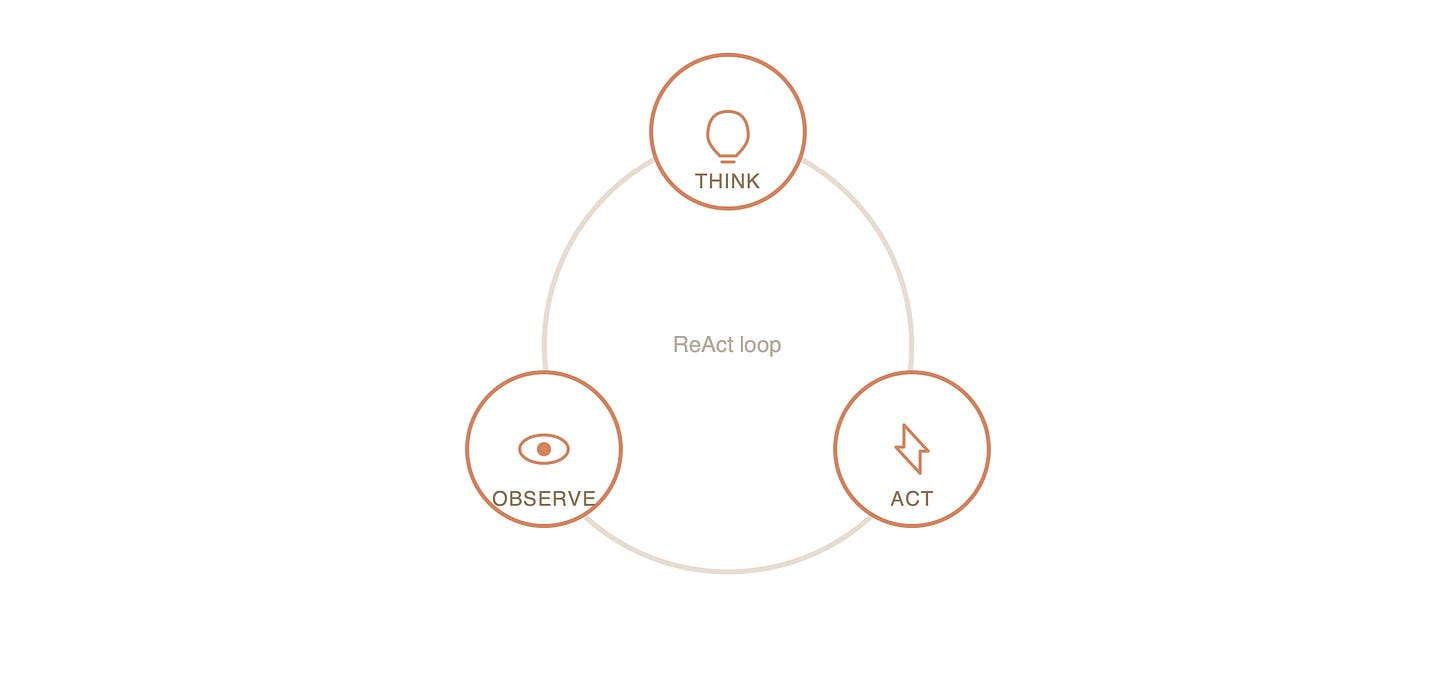

1. Thinking Out Loud: ReAct

Here’s something interesting about how you solve problems. You don’t just think in a straight line from question to answer. You think a little, do something, observe what happened, think some more, do something else. It’s a loop.

Early AI couldn’t do this. It had two separate modes that never talked to each other:

Reasoning mode: “I think the answer is 42.” (Great at thinking. Terrible at checking if 42 is actually right.)

Action mode: “I called the API and got back data.” (Great at doing things. No idea why it was doing them.)

The ReAct paper had a deceptively simple insight: what if we let the AI interleave the two? Think, then act, then observe, then think again, in a loop, just like humans do.

In OpenClaw, this plays out as a cycle:

Think: “The user wants weather data. I should check the API.”

Act: Calls the weather API.

Observe: “Response says 12°C and rain.”

Think: “I have what I need now.”

Answer: “Grab an umbrella—it’s 12 degrees and raining.”

This sounds obvious in hindsight, which is exactly what makes it a good idea. Without this loop, OpenClaw would be a very eloquent parrot. With it, the parrot can actually go fetch things.
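The cycle above can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw’s actual code: `scripted_model` stands in for a real LLM call, `fake_weather_api` stands in for a real weather service, and the city name and the `max_steps` cap are assumptions added for the example.

```python
from typing import Callable, Dict, List, Tuple


def fake_weather_api(city: str) -> str:
    """Hypothetical tool: a stand-in for a real weather API call."""
    return "12°C and rain"


# Registry of callable tools, keyed by name (illustrative only).
TOOLS: Dict[str, Callable[[str], str]] = {"weather": fake_weather_api}


def scripted_model(history: List[str]) -> Tuple[str, str, str]:
    """Stand-in for an LLM call. Returns (thought, kind, payload).

    kind is "act" (payload is "tool:argument") or "answer" (payload is the reply).
    """
    if not any(line.startswith("Observe:") for line in history):
        return ("The user wants weather data. I should check the API.",
                "act", "weather:Berlin")
    return ("I have what I need now.",
            "answer", "Grab an umbrella: it's 12 degrees and raining.")


def react_loop(question: str, model=scripted_model,
               max_steps: int = 5) -> Tuple[str, List[str]]:
    """Alternate thinking and acting until the model answers or the budget runs out."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, kind, payload = model(history)
        history.append(f"Think: {thought}")
        if kind == "answer":
            history.append(f"Answer: {payload}")
            return payload, history
        # The model asked for a tool: run it and feed the result back in.
        tool_name, _, argument = payload.partition(":")
        observation = TOOLS[tool_name](argument)
        history.append(f"Act: {tool_name}({argument})")
        history.append(f"Observe: {observation}")
    raise RuntimeError("step budget exhausted without an answer")
```

Calling `react_loop("Do I need an umbrella?")` returns the final answer plus the full trace of Think, Act, and Observe lines. The step cap matters: without it, a model that never decides to answer would loop forever.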

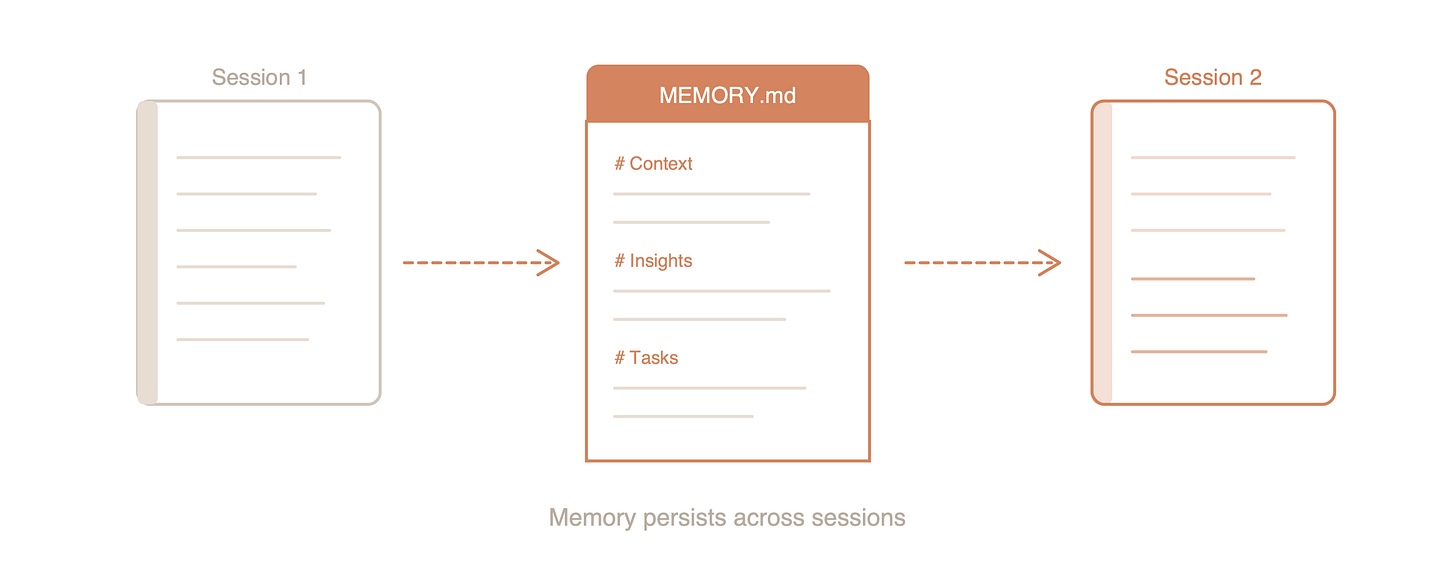

2. Giving It a Diary: Generative Agents

Even with ReAct, there was still a brutal problem: every conversation started from scratch. The AI had the memory span of a goldfish with amnesia. You could spend an hour explaining your entire project architecture, close the chat, reopen it, and be greeted with the cognitive equivalent of “New phone, who dis?”

Stanford’s famous “virtual town” experiment tackled this head-on. Researchers created a tiny simulated village populated by AI agents, each with a memory stream—a running log of everything they experienced. Crucially, the agents could also reflect on their memories, compressing raw experiences into higher-level insights.

The results were startling. Agents started behaving with continuity. They remembered grudges. They planned surprise parties. They formed opinions that evolved over time. Not because anyone programmed “hold grudges” or “plan parties,” but because memory plus reflection produces something that looks remarkably like a personality.

OpenClaw borrowed this idea wholesale. Its memory lives in simple files—MEMORY.md, HEARTBEAT.md—that persist between sessions. Kill the process, restart your computer, come back a week later. OpenClaw reads its diary and picks up exactly where it left off: “Right, I was in the middle of refactoring that authentication module.”
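A file-backed diary of this kind might look like the sketch below. The entry format (a timestamped Markdown bullet) and the `remember` and `recall` helper names are assumptions made for illustration; the article only says that memory lives in files such as MEMORY.md. The reflection step from the Stanford experiment is left out.

```python
from datetime import datetime, timezone
from pathlib import Path


def remember(memory_file: Path, note: str) -> None:
    """Append one timestamped entry to the diary file."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M")
    with memory_file.open("a", encoding="utf-8") as fh:
        fh.write(f"- [{stamp}] {note}\n")


def recall(memory_file: Path, last_n: int = 20) -> list[str]:
    """Return the most recent entries, oldest first; empty if no diary exists yet."""
    if not memory_file.exists():
        return []
    lines = memory_file.read_text(encoding="utf-8").splitlines()
    # Keep only bullet lines, dropping the leading "- " marker.
    entries = [line[2:] for line in lines if line.startswith("- ")]
    return entries[-last_n:]
```

Because the state sits on disk rather than in the process, a restart loses nothing: a fresh session calls `recall` first and places the returned entries at the top of its prompt.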

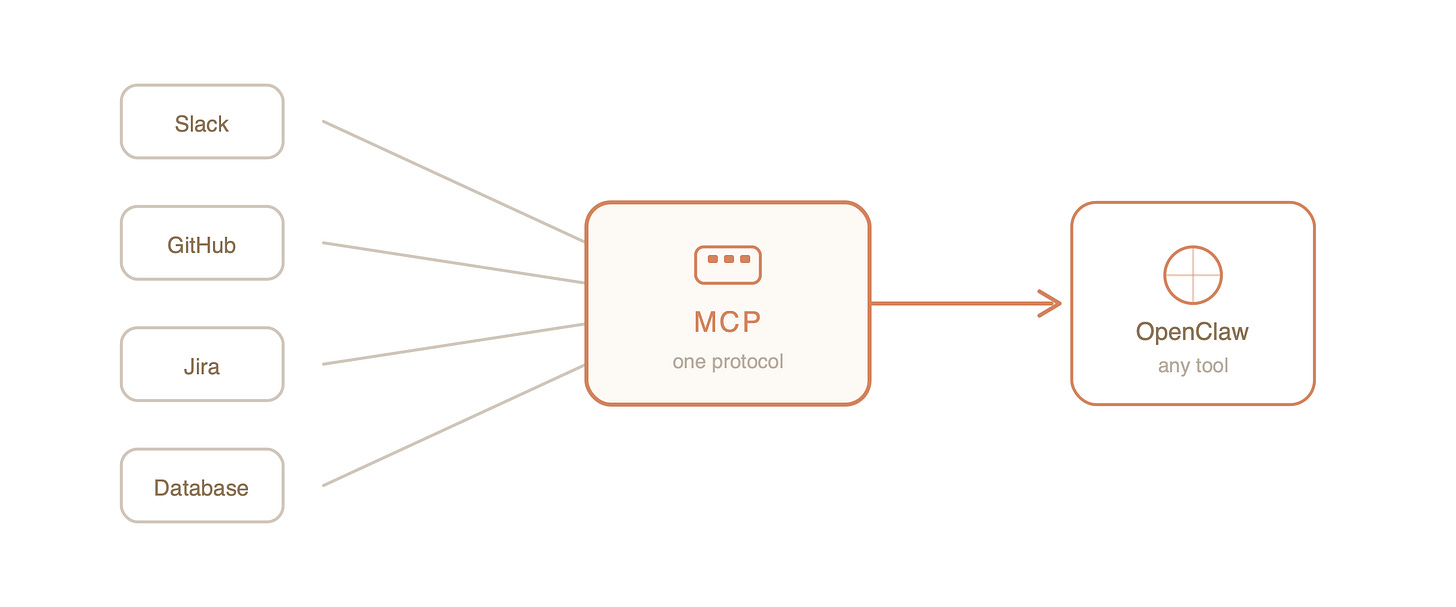

3. The Universal Adapter: MCP

Now we had an AI that could think-act-observe in loops and remember what it did yesterday. But there was one more wall: every new tool required custom integration code.

Want OpenClaw to talk to Slack? Write a Slack integration. GitHub? Write a GitHub integration. Your company’s internal ticketing system? Another integration. Your smart coffee maker? You get the idea. Each new connection was bespoke engineering, and the whole thing scaled about as well as hand-knitting a fishing net.

MCP—the Model Context Protocol—solved this the same way USB solved the peripheral problem in the 1990s. Before USB, every device needed its own special port. Printers had printer ports, keyboards had keyboard ports, and connecting anything new to your computer was an exercise in cable archaeology.

MCP is the USB port for AI tools. OpenClaw doesn’t need to know the internal details of every service it connects to. It just needs the service to speak MCP. Plug in a new tool, and OpenClaw can use it immediately—no custom code, no special handling.

This is the difference between a hammer and a hand. A hammer does one thing. A hand can pick up any tool.
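To make the “one plug for every tool” idea concrete, here is a toy, in-process sketch. MCP exchanges JSON-RPC 2.0 messages, and the method names `tools/list` and `tools/call` below follow that convention, but the message shapes are simplified and should be checked against the specification. `ToyToolServer`, `call`, and the `post_message` tool are hypothetical names invented for this example; no real MCP SDK is used.

```python
import json
from typing import Any, Callable, Dict


class ToyToolServer:
    """In-process stand-in for a tool server; no network, no real MCP SDK."""

    def __init__(self) -> None:
        self._tools: Dict[str, Dict[str, Any]] = {}

    def register(self, name: str, description: str,
                 handler: Callable[..., str]) -> None:
        self._tools[name] = {"description": description, "handler": handler}

    def handle(self, raw_request: str) -> str:
        """Answer one JSON-RPC style request with a JSON string."""
        request = json.loads(raw_request)
        method, params = request["method"], request.get("params", {})
        if method == "tools/list":
            result = {"tools": [{"name": n, "description": t["description"]}
                                for n, t in self._tools.items()]}
        elif method == "tools/call":
            tool = self._tools[params["name"]]
            text = tool["handler"](**params.get("arguments", {}))
            result = {"content": [{"type": "text", "text": text}]}
        else:
            # -32601 is the JSON-RPC 2.0 code for "Method not found".
            return json.dumps({"jsonrpc": "2.0", "id": request.get("id"),
                               "error": {"code": -32601,
                                         "message": "Method not found"}})
        return json.dumps({"jsonrpc": "2.0", "id": request["id"],
                           "result": result})


def call(server: ToyToolServer, method: str, params=None, request_id: int = 1):
    """Client side: the same two methods work for every registered tool."""
    request = {"jsonrpc": "2.0", "id": request_id,
               "method": method, "params": params or {}}
    return json.loads(server.handle(json.dumps(request)))
```

The client never imports anything tool-specific. It asks what exists with `tools/list`, then invokes a tool by name with `tools/call`; adding a new capability means registering a handler on the server side, not changing the client.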

Part 2: The Engineering (Making It Actually Work)

Here’s where things get real. If you naively stitched those three ideas together into a script, you wouldn’t get a helpful assistant. You’d get an expensive catastrophe: an AI that runs in infinite loops, burns through API credits like jet fuel, and occasionally tries to deploy your grocery list to production.

OpenClaw works because of three pragmatic engineering decisions that keep the theory from going haywire.

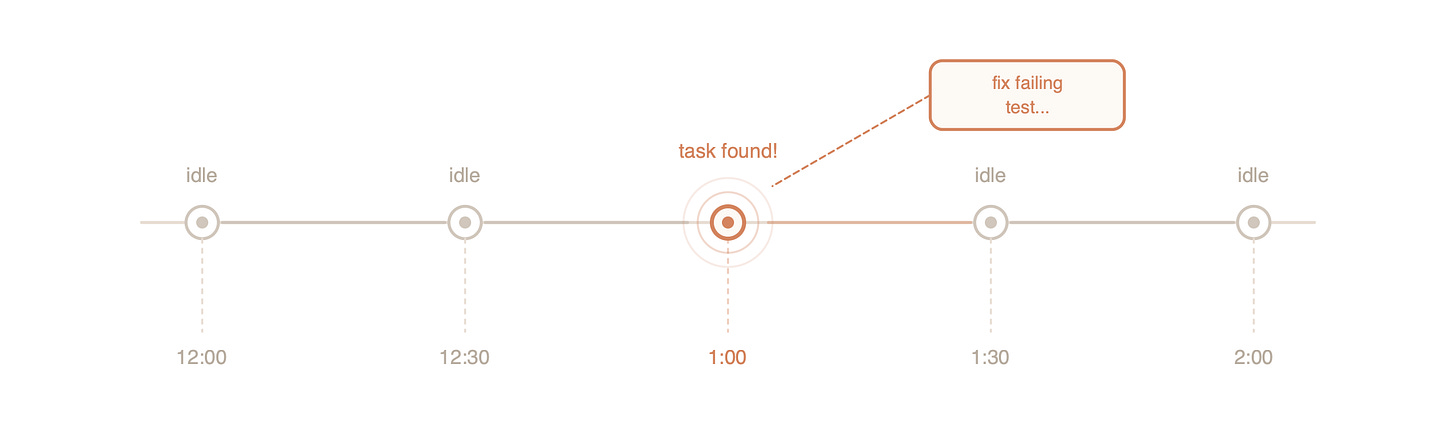

The Heartbeat: A Cron Job Pretending to Be a Pulse

The phrase “always-on AI” conjures images of a digital brain humming away in perpetual thought. The reality is far more mundane—and far more clever.

OpenClaw’s “consciousness” is a scheduled timer. Every 30 minutes (by default), an alarm goes off. OpenClaw wakes up and runs through a simple checklist:

Wake up. The scheduler pings it.

Check the to-do list. Read HEARTBEAT.md.

Decide:

Nothing to do? → Go back to sleep. (This is important—idle heartbeats cost almost nothing.)

Tasks waiting? → Start working.

Something broken? → Alert the human.

That’s it. The “illusion of life” is a cron job with good judgment about when to act and when to stay quiet. It’s the engineering equivalent of a night security guard who checks the building every 30 minutes—not because they’re always patrolling, but because they have a schedule.

The beauty is in what doesn’t happen. Most heartbeats find an empty task list and cost a fraction of a cent. The AI only burns real compute when there’s real work to do.
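One wake-up of that checklist could be sketched as follows. The article does not specify how HEARTBEAT.md is laid out, so the checkbox syntax here is an assumption: `- [ ]` for an open task, `- [x]` for a finished one, and `- [!]` for something broken. The function name `heartbeat_tick` is also hypothetical.

```python
import re
from pathlib import Path

# Assumed line format: "- [ ] task" open, "- [x] task" done, "- [!] task" broken.
TASK_LINE = re.compile(r"^- \[(?P<mark>[ x!])\] (?P<text>.+)$")


def heartbeat_tick(heartbeat_file: Path) -> tuple[str, list[str]]:
    """Run one wake-up: read the checklist and decide what to do.

    Returns ("sleep", []), ("work", open_tasks) or ("alert", broken_items).
    """
    if not heartbeat_file.exists():
        return ("sleep", [])
    open_tasks, broken = [], []
    for line in heartbeat_file.read_text(encoding="utf-8").splitlines():
        match = TASK_LINE.match(line.strip())
        if not match:
            continue  # headings and free text are ignored
        if match["mark"] == "!":
            broken.append(match["text"])
        elif match["mark"] == " ":
            open_tasks.append(match["text"])
    if broken:
        return ("alert", broken)  # something broken: tell the human first
    if open_tasks:
        return ("work", open_tasks)
    return ("sleep", [])  # the cheap, common case
```

A scheduler (cron, or a loop that sleeps for 30 minutes between calls) would invoke `heartbeat_tick` and only hand off to the model when the result is `"work"` or `"alert"`. The idle path is a single file read, which is why an empty heartbeat is so cheap.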

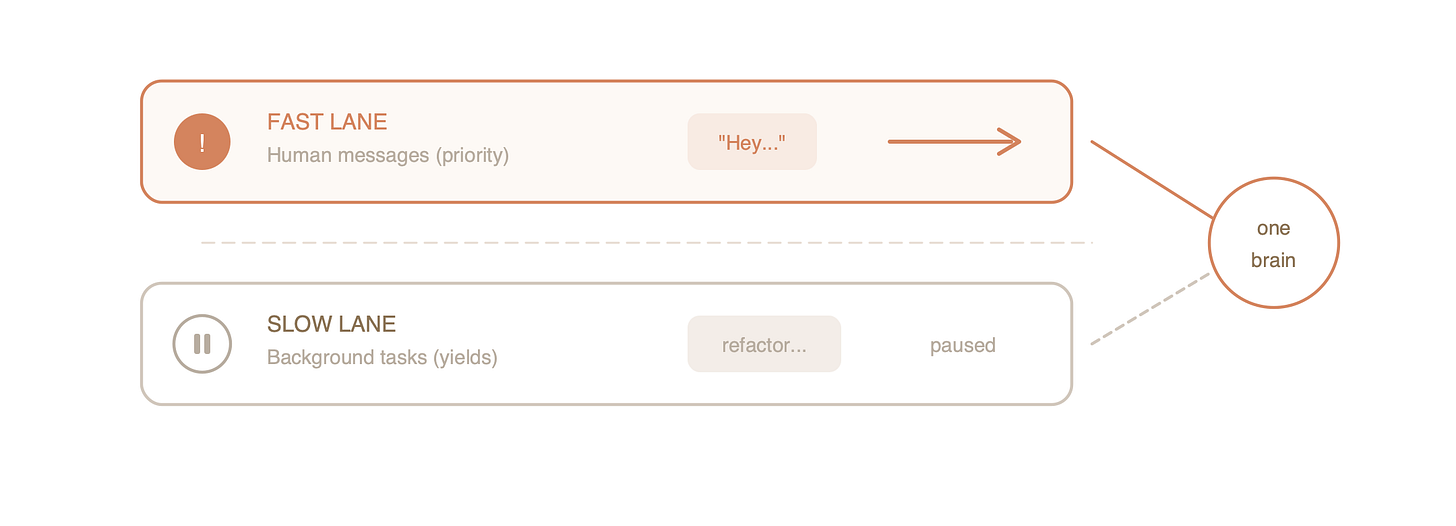

Lane-Aware Queuing: Traffic Lights for Thought

Here’s a problem you might not think about until it bites you: what happens when OpenClaw is deep in a background task—say, refactoring a module—and you suddenly send it a message?

Without safeguards, you’d get a collision. The AI has one “brain” (one LLM context), and asking it to simultaneously refactor code and answer your question is like asking someone to write an essay while holding a conversation. The essay gets fragments of the conversation mixed in, and the conversation gets fragments of the essay. Nobody wins.

OpenClaw handles this with a two-lane queue:

Fast Lane: Your messages. You’re the human; you always get priority.

Slow Lane: Background tasks from the heartbeat.

The rule is strict: if you’re talking to it, background work stops and waits. When you’re done, background work resumes. It’s not true multitasking—it’s disciplined time-sharing, the same principle that made early operating systems feel responsive even on single-core processors.

This means OpenClaw can be autonomous without being rude. It works in the background, but the moment you need it, it drops everything and pays attention.
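A two-lane queue like this can be approximated with a priority heap. The sketch below is an assumption about how such a queue might be built, not a description of OpenClaw’s internals; `LaneQueue`, `FAST_LANE`, and `SLOW_LANE` are names invented for the example.

```python
import heapq
import itertools
from typing import List, Optional, Tuple

FAST_LANE = 0  # human messages: always served first
SLOW_LANE = 1  # background tasks from the heartbeat


class LaneQueue:
    """Priority queue with two lanes and first-in, first-out order inside each lane."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        # Monotonic counter used as a tie-breaker so items keep arrival order.
        self._order = itertools.count()

    def put(self, lane: int, item: str) -> None:
        heapq.heappush(self._heap, (lane, next(self._order), item))

    def get(self) -> Optional[Tuple[int, str]]:
        """Pop the next item, preferring the fast lane; None when empty."""
        if not self._heap:
            return None
        lane, _, item = heapq.heappop(self._heap)
        return lane, item
```

The yielding comes from how work is enqueued. If a background job is split into small steps, a human message that arrives mid-job is picked up before the next step runs, and the remaining steps resume afterwards. A step already in progress still finishes first, so this is cooperative time-sharing rather than true preemption.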

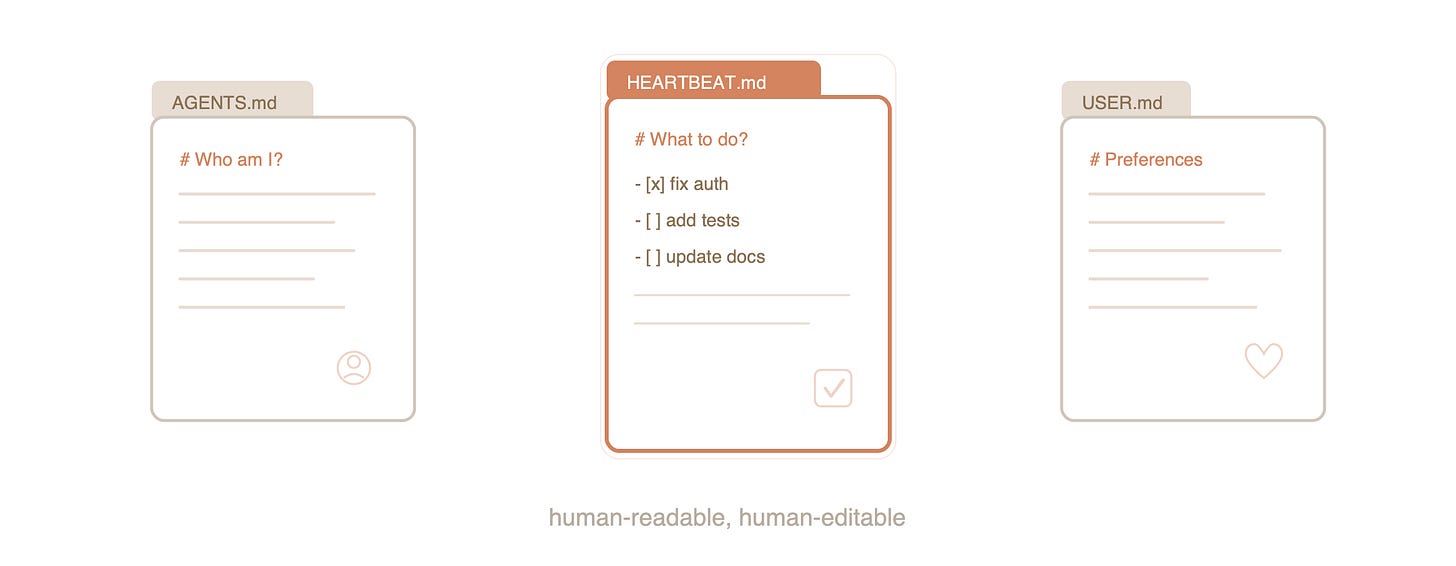

Filesystem as Memory: The Low-Tech Stroke of Genius

When most engineers hear “AI memory system,” they immediately think: vector databases, embedding models, similarity search, cloud infrastructure. The full modern stack.

OpenClaw uses text files.

Specifically, Markdown files sitting in a regular directory:

AGENTS.md → “Who am I?” (Its role and persona.)

HEARTBEAT.md → “What should I be doing?” (Its task list.)

USER.md → “What does this person care about?” (Long-term preferences.)

This is aggressively simple, and that’s exactly why it works so well.

First, it’s fast. Reading a text file is the kind of operation computers have been optimized for since the 1970s. No network calls, no query parsing, no cold starts.

Second, it’s debuggable. When something goes wrong—and things always go wrong—you can open the file in any text editor and see exactly what the AI “remembers.” Try doing that with a vector database. You’ll be writing custom query scripts just to find out what went sideways.

Third—and this is the part that’s genuinely elegant—it’s human-editable. You can open HEARTBEAT.md, cross out a task, add a new one, and save. The next time OpenClaw wakes up, it sees your changes. You’re editing the AI’s memory with a text editor. There’s something deeply satisfying about that—like being able to leave a Post-it note on a coworker’s desk, except the coworker is an AI and the desk is a filesystem.
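Loading this kind of memory takes very little code. The sketch below assumes the three files sit together in one workspace directory and are simply concatenated into a prompt preamble; the section labels and the `build_context` helper are illustrative, not taken from OpenClaw.

```python
from pathlib import Path

# File names come from the article; the section labels are illustrative.
CONTEXT_FILES = {
    "AGENTS.md": "Role and persona",
    "HEARTBEAT.md": "Current tasks",
    "USER.md": "User preferences",
}


def build_context(workspace: Path) -> str:
    """Concatenate whichever memory files exist into one prompt preamble."""
    sections = []
    for filename, label in CONTEXT_FILES.items():
        path = workspace / filename
        if path.exists():
            body = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {label} ({filename})\n{body}")
    return "\n\n".join(sections)
```

Since the files are re-read on every call, an edit made by hand in a text editor shows up the next time the context is built. No index needs rebuilding and no cache needs invalidating.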

Conclusion: It’s Alive (With an Asterisk)

OpenClaw isn’t a single invention. It’s what happens when three good ideas meet three good engineering decisions:

ReAct: “How do I actually do things?”

Generative Agents: “How do I remember who I am?”

MCP: “How do I use any tool?”

Heartbeat: “When do I wake up?”

Lane Queuing: “How do I stay sane?”

Filesystem Memory: “Where do I keep my thoughts?”

None of these ideas are individually revolutionary. ReAct is a loop. Generative Agents is a diary. MCP is a protocol. The heartbeat is a cron job. The queue is basic concurrency control. The memory system is literally just files.

But assembled together, they cross a threshold that feels qualitatively different. The system exhibits initiative. It maintains continuity across sessions. It adapts to new tools without being rewritten. It manages its own resources. It knows when to work and when to wait.

Is it “alive”? Of course not. But it’s also no longer a statue waiting to be poked. It’s something new—a system that acts on its own schedule, remembers its purpose, and gets work done while you sleep.

So if you wake up tomorrow and find a commit you didn’t make, don’t panic. OpenClaw just checked its sticky note, realized it had a job to do, and did it. Then it went back to sleep—because even digital workers know not to waste electricity on an empty to-do list.

References