Morning, CEO!

Anthropic tripled their engineering team in one year.

Each engineer became 70% more productive.

The tool behind it? Claude Code.

Here’s the weird part: 80% of Claude Code is written by Claude Code itself.

Today we’re stealing Boris Cherny’s playbook. Not the “what” (you already know Claude Code won). The “how”—the forks in the road where they could have zigged but zagged instead.

And why those zigs would have killed them.

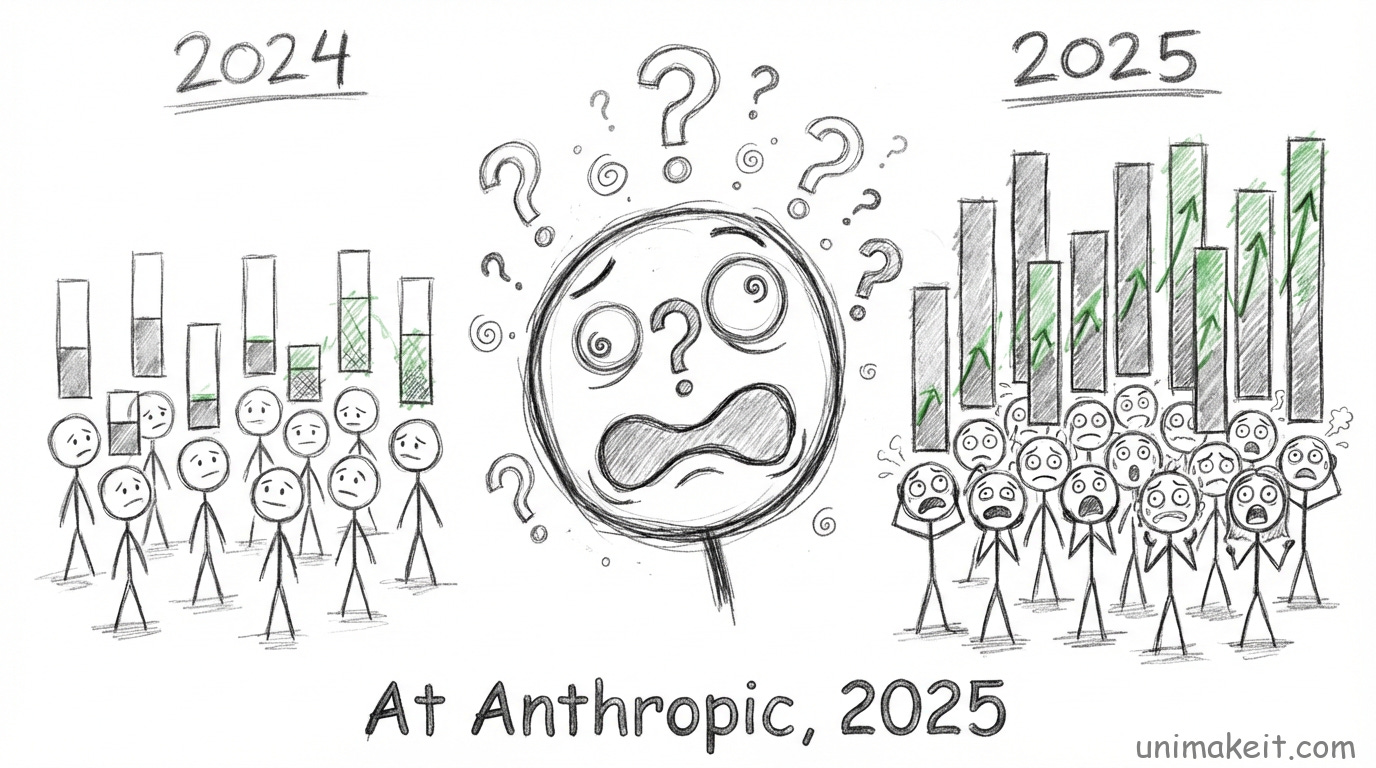

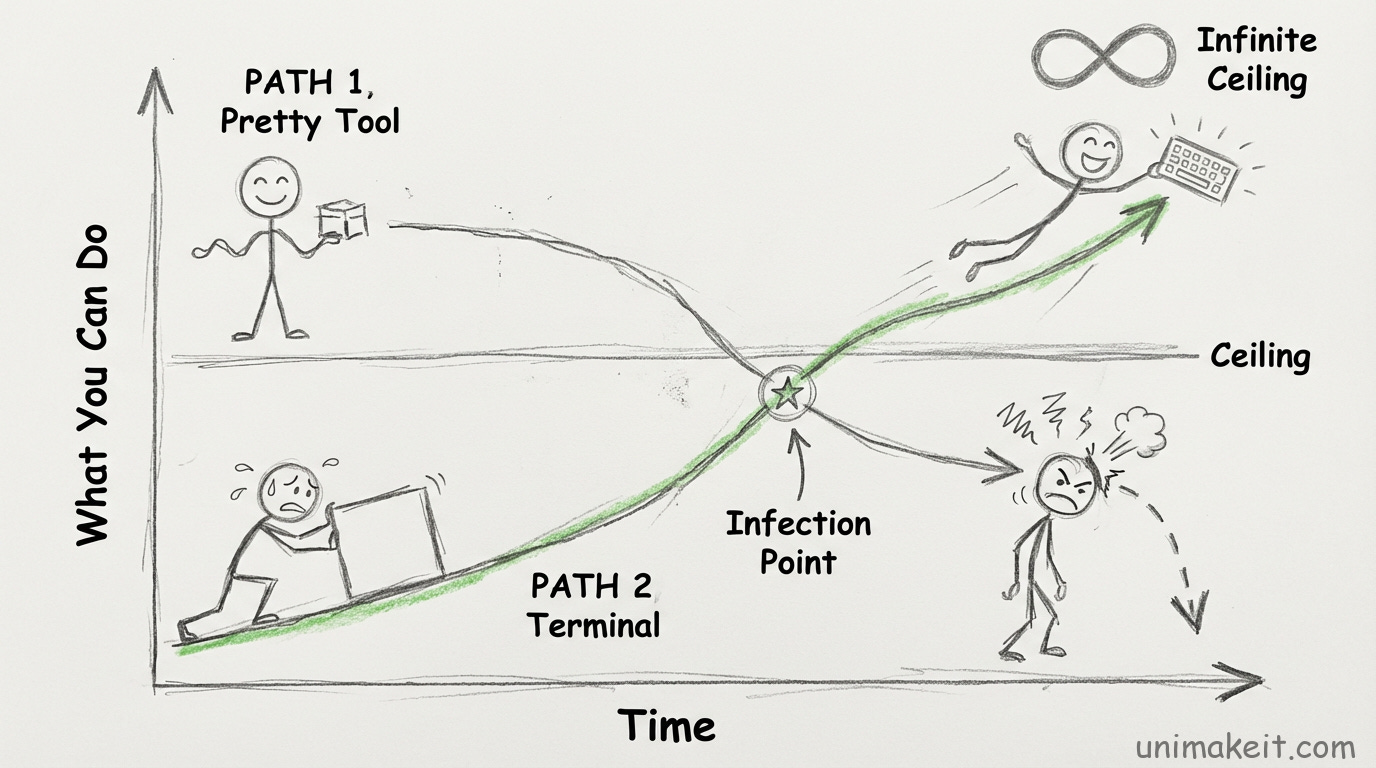

Crossroad #1: The Beautiful UI vs The Black Screen

Picture this:

It’s early 2024. Every AI coding tool is racing to build the prettiest interface.

Cursor has that sleek split-pane editor. Windsurf has beautiful diffs. Everyone’s designing for the Figma generation.

Then Boris builds... a command line tool.

A terminal.

Black screen. Green text. Zero GUI.

His manager at Anthropic, Ben Mann, could have said: “Nobody’s going to use this. Make it pretty.”

Instead he said: “Don’t build for today’s model. Build for the model 6 months from now.”

This is the fork in the road.

Option A: Build the polished IDE everyone expects.

Pro: Non-technical users can use it day one. Lower learning curve. Easier adoption.

Con: You have to build ALL the scaffolding. File trees. Syntax highlighting. Git integration. Settings panels. This takes months. And limits what the AI can do to what you’ve built UI for.

Option B: Give Claude raw terminal access and nothing else.

Pro: Claude can do literally anything you can do in a terminal. Zero limitations. Also, you ship in weeks not months.

Con: Terrifying for non-technical users. Sales team sees it and goes “...what is this?”

Boris chose Option B.

Here’s why it worked:

When you give Claude a terminal, you give it the same interface YOU use.

Anything you can do, Claude can do.

Need to check git history?

git log

Need to run tests?

npm test

Need to deploy?

./deploy.sh

No custom tools. No wrapper APIs. No “we need to build that feature.”

Just bash.
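To make "just bash" concrete, here is a minimal Python sketch of what a single run-a-command tool might look like. The function name `run_shell` and the shape of its return value are assumptions made for illustration. This is not Claude Code's actual implementation.

```python
import subprocess


def run_shell(command: str, timeout: int = 30) -> dict:
    """Run one shell command and return what a terminal user would see.

    Hypothetical helper: the name and return shape are illustrative only.
    """
    result = subprocess.run(
        command,
        shell=True,           # hand the string to the system shell as typed
        capture_output=True,  # collect stdout and stderr instead of printing
        text=True,            # decode bytes to str
        timeout=timeout,      # do not hang forever on a stuck command
    )
    return {
        "exit_code": result.returncode,
        "stdout": result.stdout,
        "stderr": result.stderr,
    }


if __name__ == "__main__":
    # git, tests, deploys: the function stays the same, only the string changes.
    out = run_shell("echo hello from the shell")
    print(out["exit_code"], out["stdout"].strip())
```

One generic function covers every command, which is the whole point. A real tool would also need some permission check before running anything, and this sketch leaves that out.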

The tradeoff is brutal though.

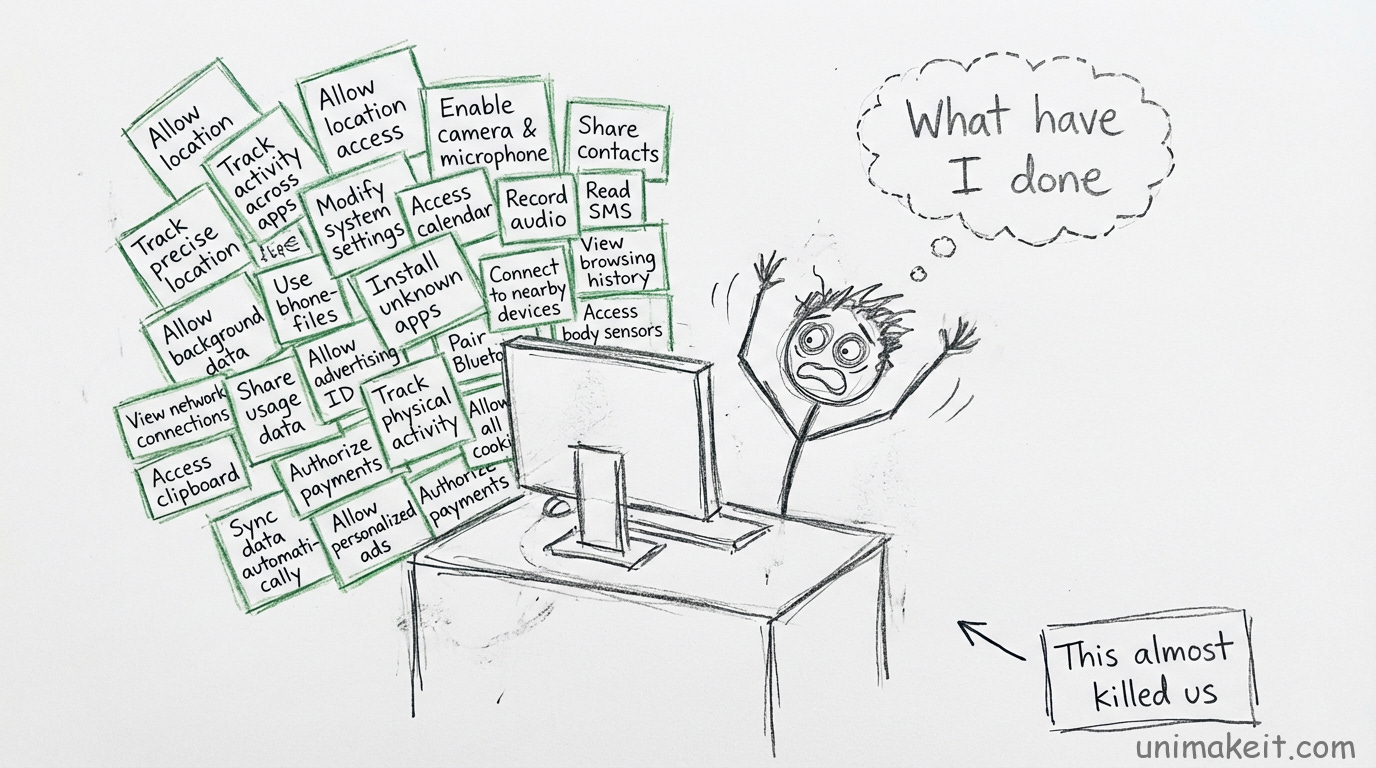

Cat (the PM) told a story about someone on their marketing team trying Claude Code. She got 30 permission pop-ups in a row because she’d never used a terminal before.

They almost lost her.

But here’s what I didn’t expect:

The terminal actually made it more flexible, not less.

Because non-technical people could now do technical things.

Brandon, their data scientist, had never used a terminal. Now he runs multiple Claude Code instances writing SQL.

Sales people use it to query Salesforce.

Someone used it to decide which sofa to buy.

Nobody expected this.

If they’d built a polished coding IDE, it would have stayed a coding tool.

The terminal made it more accessible long-term, not less.

This is backwards from how we usually think.

We think: Polish → Accessibility.

But actually: Flexibility → Accessibility (once users learn the basics).

The lesson for you:

When you’re building something (a workflow, a template, a system), you face this choice:

Make it pretty and constrained? Or make it ugly and flexible?

We default to pretty.

Pretty feels professional. Pretty feels done.

But pretty also means locked-in.

Every design choice you make is a constraint you’re imposing.

Sometimes the right move is to give people the raw tools and get out of the way.

Yes, the learning curve is steeper.

But the ceiling is way higher.

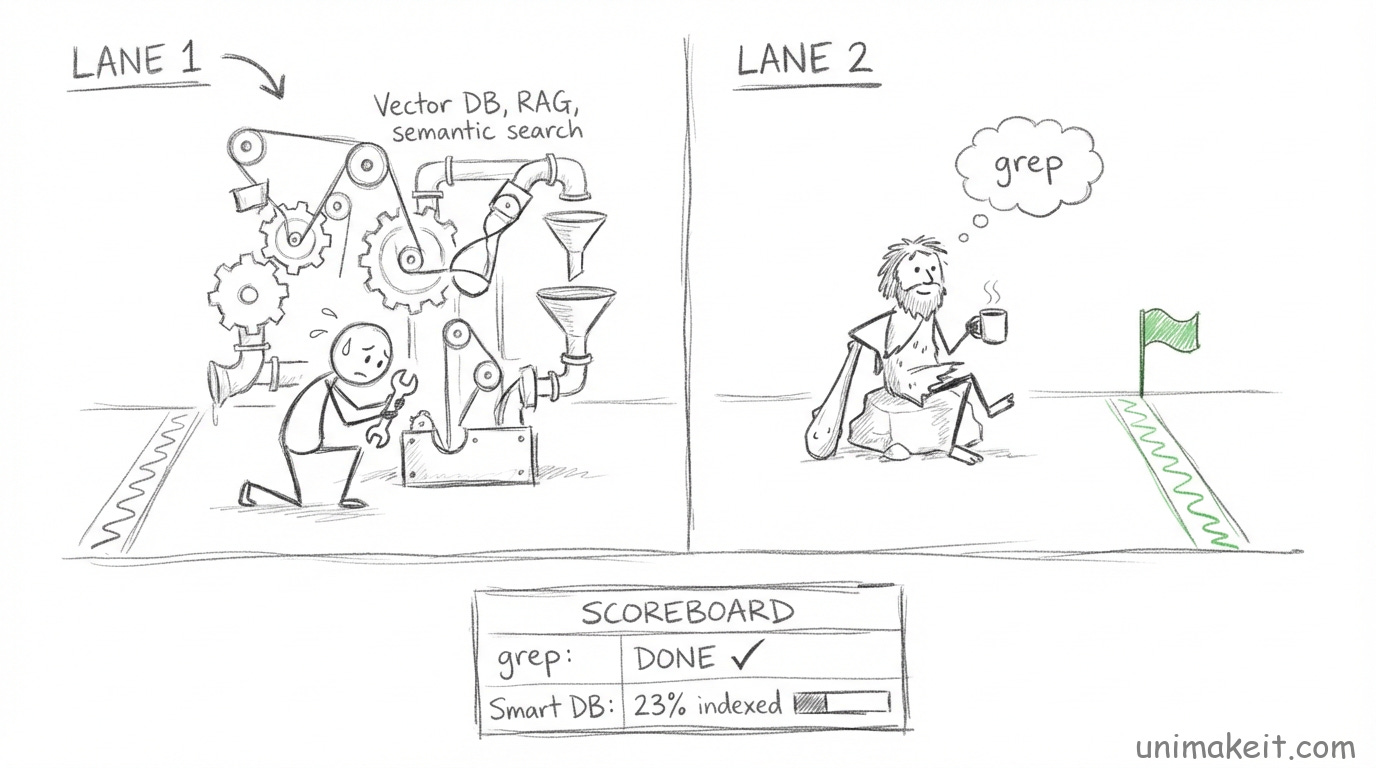

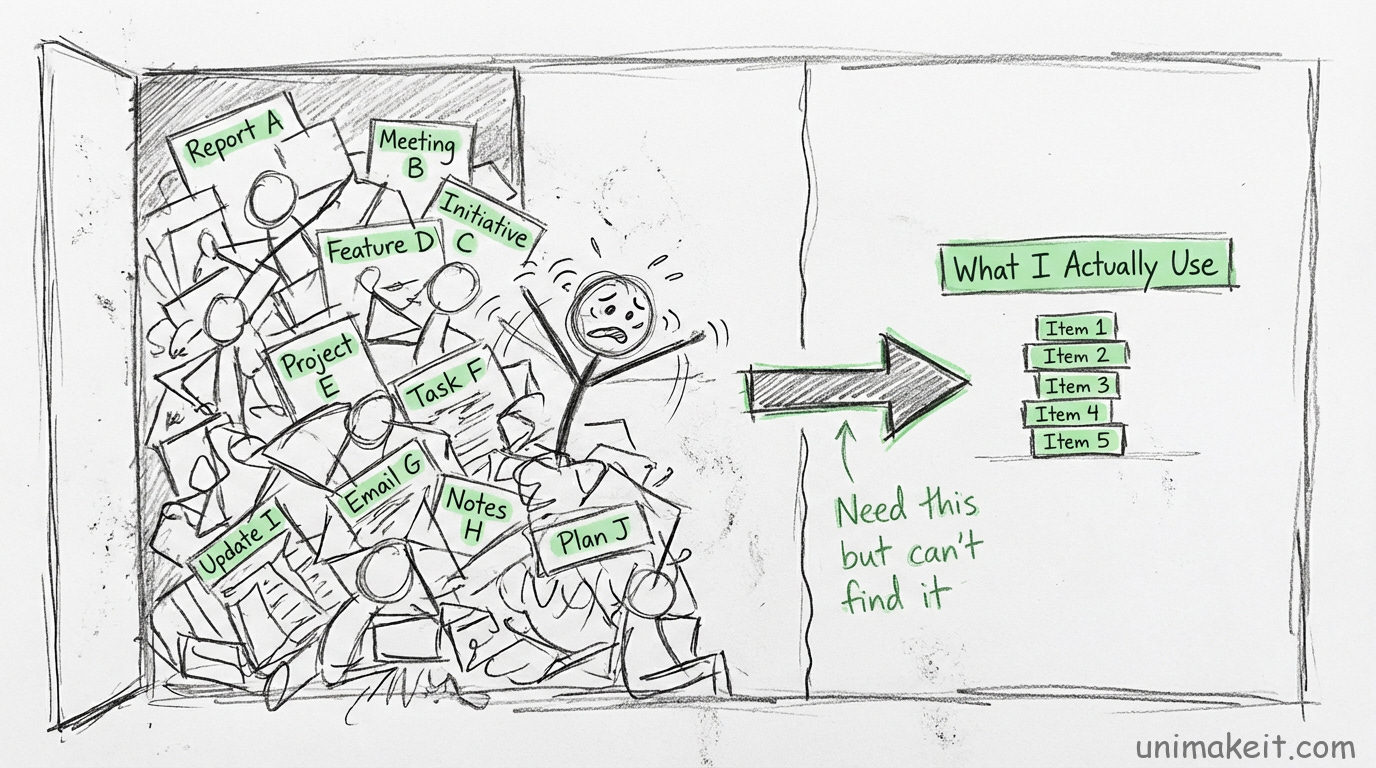

Crossroad #2: The Smart Database vs The Dumb Search

Early Claude Code had a problem:

How does Claude find relevant code in a massive codebase?

Every AI coding tool faces this. Your codebase is too big to fit in context.

The obvious answer: Use RAG (Retrieval Augmented Generation).

Index the codebase. Create vector embeddings. Build semantic search. Store it in a database.

This is what everyone does. This is the “smart” way.

Boris tried it. It worked... okay.

But there were problems:

Problem 1: The index goes stale. You change code. The index doesn’t update. Now Claude is working with old information.

Problem 2: Security. Your index has to live somewhere. A third-party provider? Now you’re uploading your codebase to someone else’s servers. Build it yourself? Now you’re maintaining database infrastructure.

Problem 3: Complexity. More moving parts. More things to break. More things to maintain.
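Problem 1 is easy to reproduce. The sketch below is a toy, and it assumes a plain keyword index rather than vector embeddings (the helper names `build_index` and `live_search` are made up for this example). The staleness mechanism is the same either way: the index is a snapshot, and the code keeps moving.

```python
import os
import tempfile


def build_index(root: str) -> dict:
    """Map each word to the set of files containing it (a toy keyword index)."""
    index = {}
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(dirpath, name)
            with open(path, encoding="utf-8") as fh:
                for word in fh.read().split():
                    index.setdefault(word, set()).add(path)
    return index


def live_search(root: str, word: str) -> set:
    """Scan the files as they are right now, the way grep would."""
    hits = set()
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(dirpath, name)
            with open(path, encoding="utf-8") as fh:
                if word in fh.read().split():
                    hits.add(path)
    return hits


def demo() -> tuple:
    """Return (hits from the stale index, hits from a live scan)."""
    with tempfile.TemporaryDirectory() as root:
        path = os.path.join(root, "auth.py")
        with open(path, "w", encoding="utf-8") as fh:
            fh.write("def login ( user ) : pass")
        index = build_index(root)  # snapshot taken here

        # The code changes after the snapshot: login is renamed to sign_in.
        with open(path, "w", encoding="utf-8") as fh:
            fh.write("def sign_in ( user ) : pass")

        stale = index.get("sign_in", set())   # the index never saw the rename
        fresh = live_search(root, "sign_in")  # the live scan does
        return len(stale), len(fresh)


if __name__ == "__main__":
    print(demo())  # (0, 1)
```

The index returns nothing for the renamed function until someone rebuilds it. The live scan has no snapshot to go stale.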

So Boris hit a fork in the road:

Option A: Invest in making RAG really good. Build auto-indexing. Build security. Build infrastructure. Optimize retrieval. This is technically sophisticated. This is what a “real” company would do.

Option B: Delete all the RAG code and just let Claude search with grep and bash. This is caveman simple. This seems worse. Grep is brute force. It’s slow. It’s expensive (more tokens, more API calls).

Boris chose Option B.

He deleted the RAG system.

Now when Claude needs to find something, it just... searches. With regular code search. With grep. With find.

Agentic search.

Claude decides what to search for. Runs the search. Looks at results. Searches again. Iterates.

This seems insane, right?

You’re burning tokens and time on something a database could do instantly.

But here’s what happened:

It outperformed RAG by a lot.

Boris’s words: “It outperformed everything by a lot.”

Why?

Because the model is smart enough to know what to search for.

RAG is one-shot retrieval. You ask for “authentication” and it hands back whatever chunks score closest to that query, relevant or not, with no second look.

Agentic search is smart exploration. Claude searches, evaluates, searches differently, narrows down.

It understands what it’s looking for.
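Here is a rough Python sketch of that search, look, refine loop. Everything in it is an assumption for illustration: `grep` is a small pure-Python stand-in for the real command, and `next_query` is a hard-coded rule standing in for the model's judgment. In the real tool the model decides what to search for next.

```python
import os
import re
import tempfile


def grep(root: str, pattern: str) -> list:
    """Return (path, line_number, line) for every line matching the regex."""
    regex = re.compile(pattern)
    hits = []
    for dirpath, _dirs, files in os.walk(root):
        for name in sorted(files):
            path = os.path.join(dirpath, name)
            with open(path, encoding="utf-8", errors="ignore") as fh:
                for lineno, line in enumerate(fh, start=1):
                    if regex.search(line):
                        hits.append((path, lineno, line.rstrip()))
    return hits


def next_query(query: str, hits: list):
    """Stand-in for the model (hypothetical rule, not real model behavior).

    If there are too many hits, narrow the search to function definitions.
    Otherwise return None, meaning "stop, these results are good enough".
    """
    if len(hits) > 3 and not query.startswith("def "):
        return "def .*" + query
    return None


def agentic_search(root: str, query: str, max_steps: int = 5) -> list:
    """Search, inspect the results, refine the query, repeat."""
    hits = []
    for _ in range(max_steps):
        hits = grep(root, query)
        refined = next_query(query, hits)
        if refined is None:
            break
        query = refined
    return hits


def demo() -> list:
    files = {
        "a.py": "# auth helpers\ndef check_auth(user):\n"
                "    return user.auth_token is not None\n",
        "b.py": "from a import check_auth\n# call auth on every request\n"
                "if check_auth(u): pass\n",
    }
    with tempfile.TemporaryDirectory() as root:
        for name, body in files.items():
            with open(os.path.join(root, name), "w", encoding="utf-8") as fh:
                fh.write(body)
        found = agentic_search(root, "auth")
        return [(os.path.basename(p), n, line) for p, n, line in found]


if __name__ == "__main__":
    print(demo())  # [('a.py', 2, 'def check_auth(user):')]
```

The first search for "auth" matches six lines across both files. The second, narrower search lands on the one definition that matters. No index was built at any point.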

Plus:

No index to maintain

No index to secure (nothing gets uploaded anywhere just to be indexed)

No drift between code and index

Works immediately (no indexing step)

The tradeoff:

You’re trading tokens and latency for simplicity and security.

This trade made no sense in 2020.

But in 2026? When model calls are cheap and getting cheaper?

When security is paramount?

When developer time is more expensive than compute?

Suddenly burning tokens to avoid infrastructure complexity is the smart move.

Here’s what this means for you:

You probably have some system that’s “optimized.”

Some spreadsheet with elaborate formulas. Some process with seven steps to avoid one manual check.

You built complexity to save effort.

But what if the effort you saved is now cheaper than the complexity you’re maintaining?

I have a friend who built an elaborate automation to avoid checking something twice a week.

The automation breaks constantly. She spends more time fixing it than she saved.

She won’t delete it because “I spent so much time building it.”

Sunk cost fallacy, optimized edition.

Sometimes the dumb solution is the smart solution.

Especially when the dumb solution is maintainable and the smart solution is brittle.

Boris’s rule: Simple today beats optimized tomorrow.

Because tomorrow the constraints will change anyway.

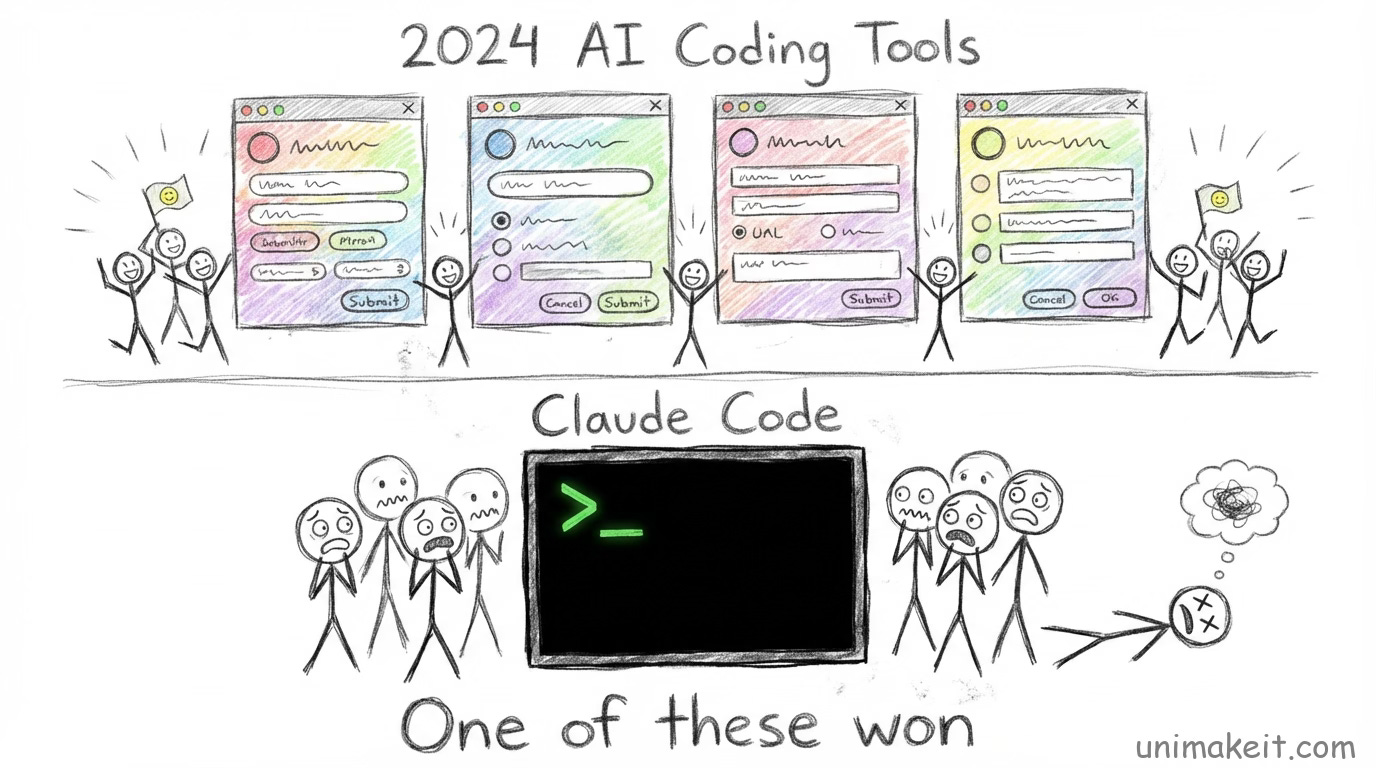

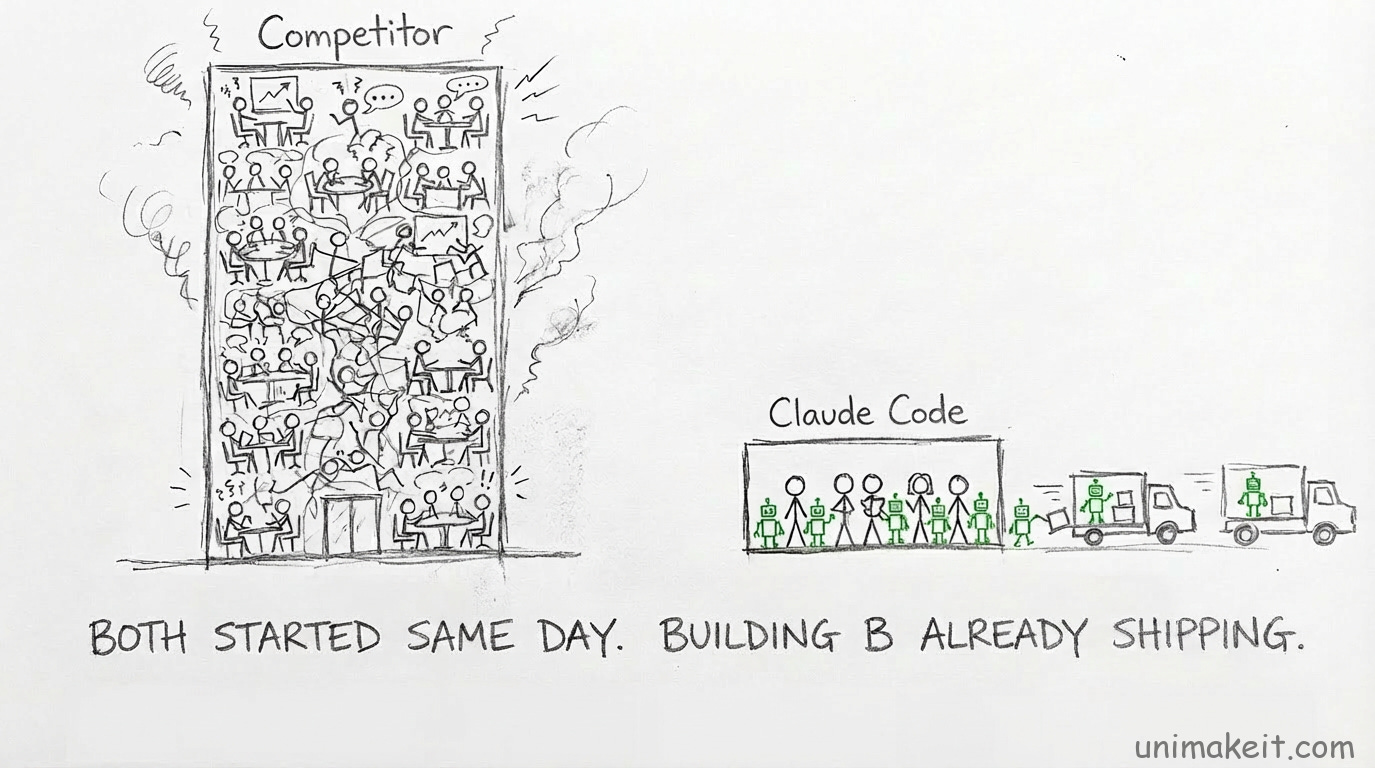

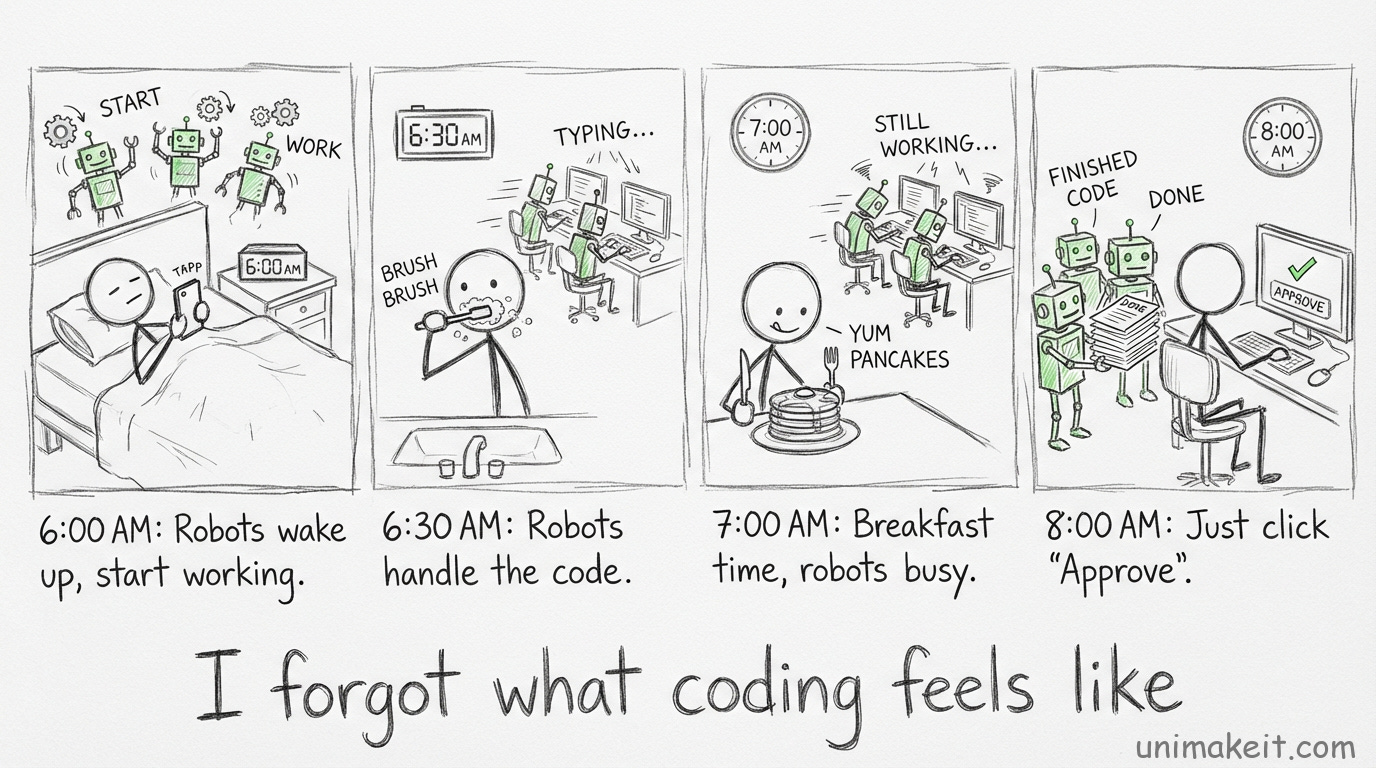

Crossroad #3: The Big Team vs The Tiny Team

Here’s a weird one:

Anthropic raised a ton of money. They’re scaling fast. They’re hiring aggressively.

Claude Code is their most-used internal tool. Roughly 70-80% of their engineers use it every day.

Standard playbook: Throw 50 engineers at it. Scale it up. Build everything.

Instead?

They kept the team tiny.

Cat (the PM) explains their philosophy:

“At Anthropic, our product principle is: do the simple thing first. You staff things as little as you can and keep things as scrappy as you can because the constraints are actually pretty helpful.”

This is bonkers.

You have the money. You have the demand. You have executive buy-in.

Scale up!

But they didn’t.

Why?

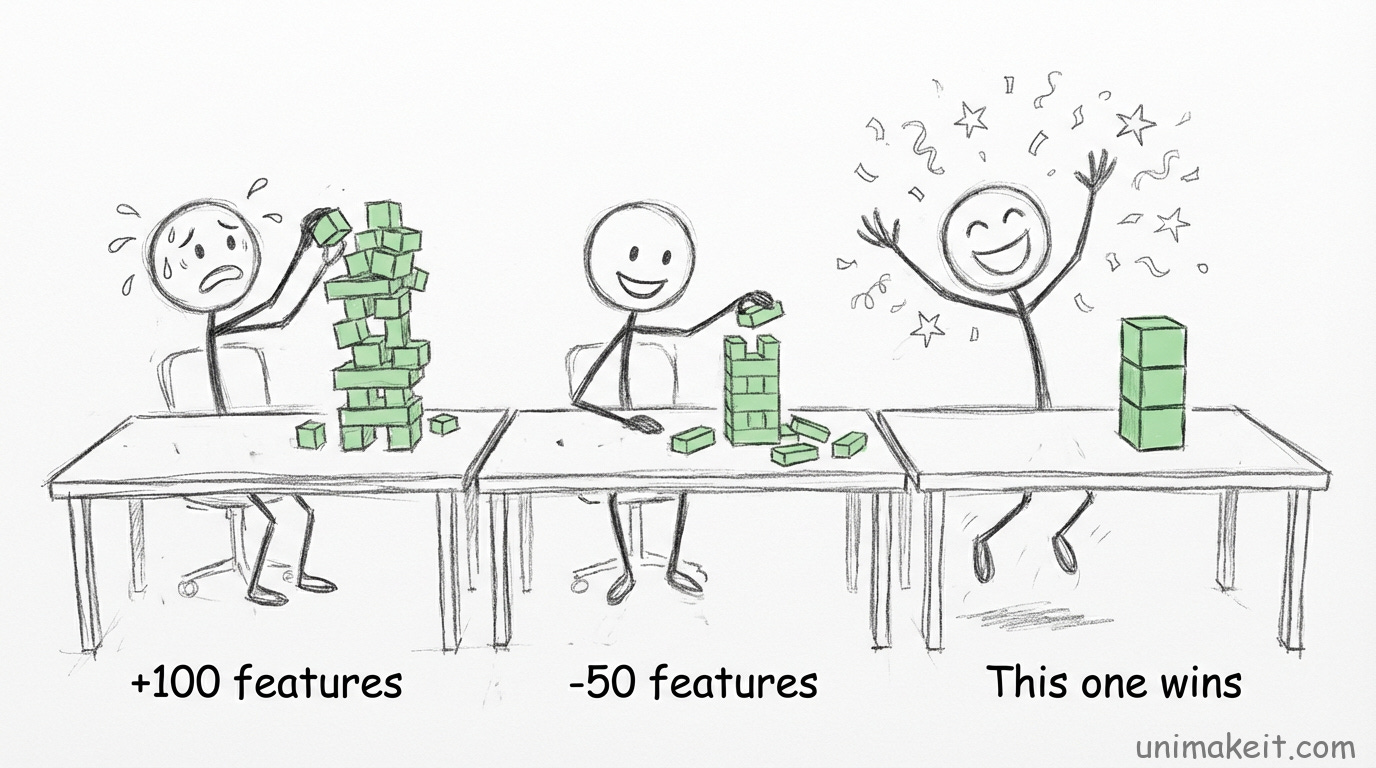

Fork in the road:

Option A: Staff up to 50+ engineers.

Pro: You can build everything. Every feature request. Every integration. Polish everything. Move fast on execution.

Con: More people = more coordination = more process = slower decisions. Also, more features = more complexity = more maintenance = more bugs.

Option B: Keep the team small.

Pro: Fast decisions. Everyone knows everything. No coordination overhead. Forces you to say no to most features.

Con: Slow execution. Can’t build everything. Some obvious features won’t ship. Users will complain.

They chose Option B.

The current team is still small. Under 10 people.

Boris said something wild: “Most of our features are just people building the thing they wish the product had.”

It’s not top-down roadmap planning. It’s engineers scratching their own itches.

Dixon wanted to get Slack notifications from Claude Code. Built hooks.

Daisy wanted a plugin marketplace. Built plugins.

Someone wanted Vim mode. It went viral.

But here’s the tradeoff:

By staying small, they can’t build everything.

Cat mentioned they’re still figuring out the subscription model. Still working on better pricing communication. Still building out enterprise features.

These are obvious things a bigger team would have shipped.

But staying small forces them to choose.

And choosing is how you maintain product clarity.

Big teams build everything. Small teams build the right things.

There’s another reason this works:

80% of Claude Code is written by Claude Code.

They don’t need 50 engineers.

They need 5 engineers managing 50 Claude instances.

Boris’s morning routine now:

Wake up

Open Claude Code mobile app

Start 3-5 agents working on different tasks

Go eat breakfast

Come back, review their work

He’s not writing code. He’s orchestrating agents.

Fiona, the team’s manager, hadn’t coded in 10 years. Now she ships PRs weekly.

When your engineers have 10x leverage from AI, you don’t need 10x as many engineers.

You need really good judgment about what to build.

And small teams have better judgment than large teams.

Always.

Here’s what this means for you:

When you get resources (budget, headcount, time), the default is to use them.

“We have the budget, so let’s hire.”

“We have the time, so let’s build more features.”

“We have the people, so let’s add more projects.”

But resources are a trap.

More resources = more complexity = more coordination = slower decisions.

Constraints force clarity.

When you can only do three things, you pick the three most important things.

When you can do thirty things, you do thirty mediocre things.

The best projects I’ve worked on had too few people and too little time.

We had to be ruthless about scope. We had to make hard choices.

We built something focused and good.

The worst projects had plenty of everything.

We built a sprawling mess that nobody loved.

Boris’s insight: Treat constraints as features, not bugs.

If you don’t have enough people, good. Forces you to automate.

If you don’t have enough time, good. Forces you to ship simple.

If you don’t have enough budget, good. Forces you to be creative.

The question isn’t “how do I get more resources?”

The question is “how do I do more with what I have?”

That’s where the leverage is.

Outro

Three crossroads. Three counterintuitive choices.

Raw terminal over polished UI. Dumb search over smart database. Tiny team over big team.

Each one traded short-term convenience for long-term leverage.

Each one only works if you trust the model will get better.

And it will.

Your move: Find one place where you’ve over-optimized. Delete it. Replace it with the dumb, simple version.

Watch what happens.

Links: