Morning, CEO!

While normal people were making New Year’s resolutions they definitely won’t keep, the team at DeepSeek was busy rewriting the laws of physics for AI.

On New Year’s Eve, they dropped a paper that solves a problem so annoying it has plagued AI engineers for a decade. By fixing it, they didn’t just stabilize their models; they created a blueprint for scaling intelligence without losing your mind.

If you want to know how to handle massive growth without crashing (whether you’re a neural network or a human managing three freelance clients and a needy golden retriever), read on.

Here is how we steal their playbook.

1. The “Single-Lane” Problem

To understand what DeepSeek did, you have to look at how we usually build these things.

For the last decade, the industry standard for building AI has been the Residual Connection.

Think of it like a very reliable, strictly regulated corporate mailroom.

In the old days, messages (signals) would get lost or garbled as they moved up the chain of command. The Residual Connection fixed this with a “Safety Rule”: “You can process the mail, but you must strictly preserve the original package and pass it along with your changes.”

This ensures the signal never dies. The original package travels from Layer 1 to Layer 100 without getting lost, no matter how many edits pile up along the way. It is safe. It is reliable. It is a big part of why tools like ChatGPT exist today.
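If you like seeing the mailroom rule in code, here is a minimal toy sketch. It is an illustration only: `weak_layer`, `plain_stack`, and `residual_stack` are made-up names, and the 0.001 factor is an arbitrary stand-in for a layer that barely passes anything along.

```python
# Toy comparison: a plain stack of layers vs. the same stack with residual connections.
# `weak_layer` is a hypothetical stand-in for a real layer, not anything from the paper.

def weak_layer(x):
    """A layer whose output is only a faint echo of its input."""
    return [0.001 * v for v in x]

def plain_stack(x, depth):
    """No Safety Rule: each layer replaces the signal with its own output."""
    for _ in range(depth):
        x = weak_layer(x)
    return x

def residual_stack(x, depth):
    """Safety Rule: each layer adds its changes to the preserved original, x + f(x)."""
    for _ in range(depth):
        x = [v + dv for v, dv in zip(x, weak_layer(x))]
    return x

signal = [1.0, -2.0, 3.0]
plain_out = plain_stack(signal, depth=100)
residual_out = residual_stack(signal, depth=100)

print(plain_out)     # values vanishingly close to zero
print(residual_out)  # still recognizably the original signal
```

Same layers, same depth. The only difference is whether the original package is passed along with the changes.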

But it has a bandwidth problem.

Because the standard Residual Connection treats the information flow like a single, protected stream, it limits how much information can flow at once. It’s like a polite, single-lane road. The cars (data) move smoothly, but you can only fit so many cars on the road before you hit a limit.

Engineers wanted more. They didn’t just want a safe road; they wanted a massive logistical network.

2. The “Toddler on Espresso” Trap

So, the engineers got ambitious. They thought, “Let’s turn this single lane into a twelve-lane superhighway!”

They called this Hyper-Connections (HC).

The idea was to widen the path so more information could flow at once. Total freedom! Maximum bandwidth! Everyone talking to everyone!

I tried this strategy in my 20s. I said “yes” to every project, every social event, and every hobby.

The result for me was a panic attack in a grocery store.

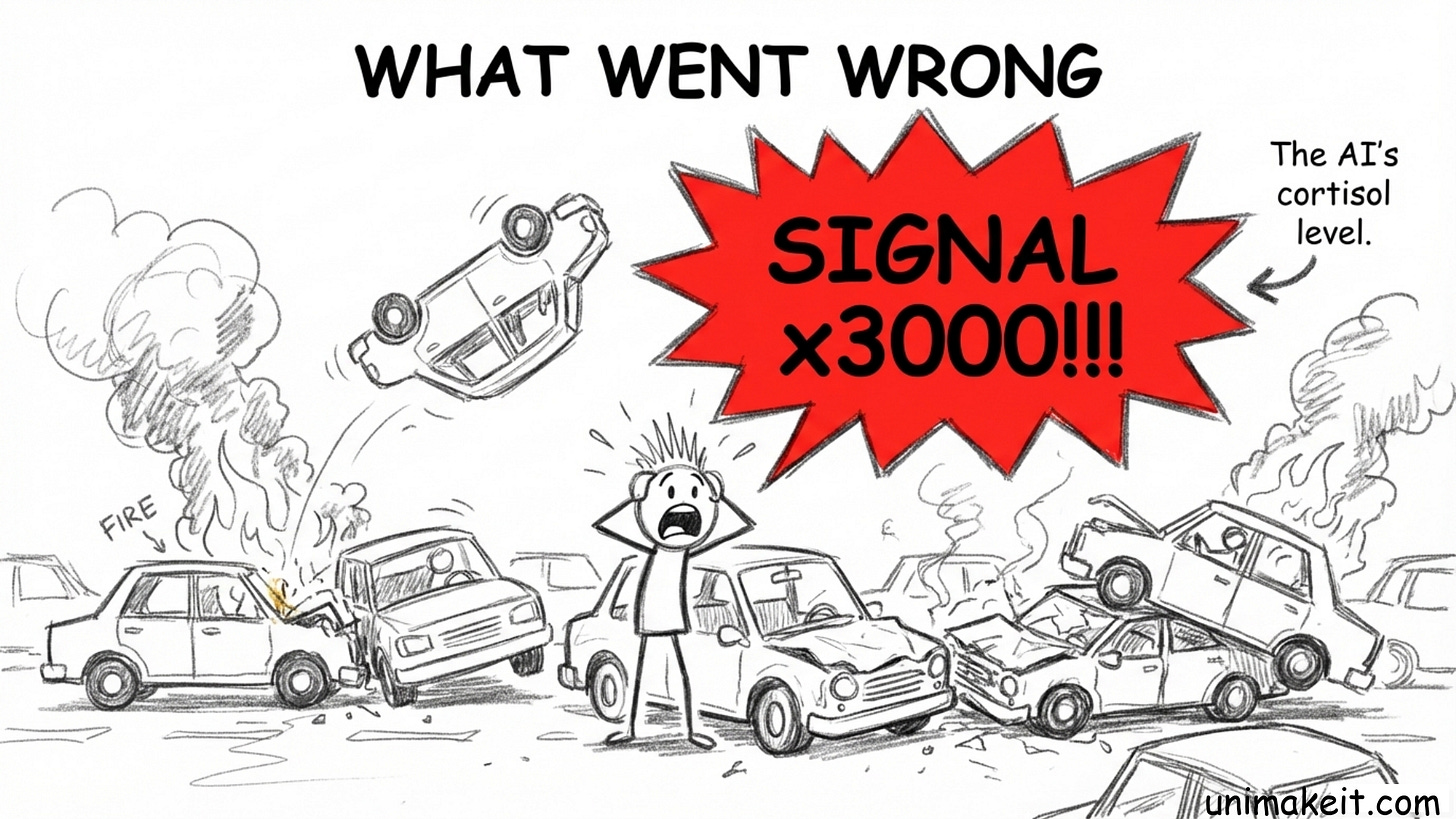

The result for DeepSeek? Their AI had a mathematical panic attack.

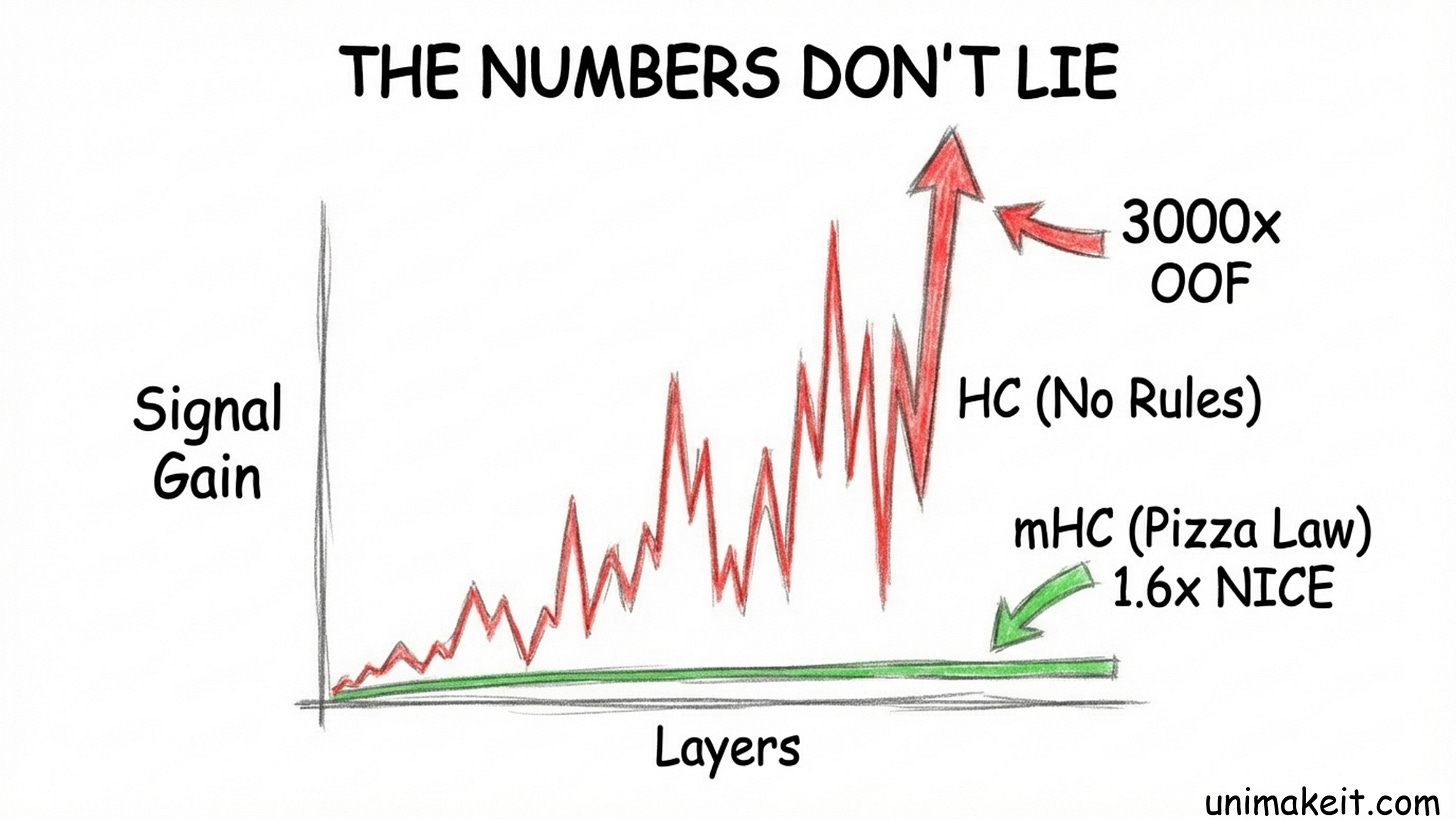

When they opened up these “Hyper-Connections” without any rules, the signal inside the model exploded. The amplification factor hit 3,000x.

Imagine whispering a secret to a friend, and by the time it reaches the last person in the line, they are screaming it through a megaphone while setting off fireworks.

The model became unstable. The “gradient norm” (a measure of how big the model’s learning updates are, basically the AI’s cortisol level) went haywire.

It turns out, unconstrained freedom isn’t genius. It’s just noise.
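To get a feel for how a modest boost turns into a megaphone, here is a toy sketch. Everything in it is an assumption for illustration: the 4 lanes, the 40 layers, and the `mix` matrix are invented, and it does not reproduce the paper's 3,000x measurement. Each layer only hands out 20% more than it receives, and that is enough.

```python
# Toy illustration of unconstrained mixing across lanes.
# The matrix, lane count, and depth are made up, not taken from the paper.

LANES = 4
DEPTH = 40

# Every row sums to 1.2, so each layer hands out a bit more "energy" than it received.
mix = [
    [0.6, 0.2, 0.2, 0.2],
    [0.2, 0.6, 0.2, 0.2],
    [0.2, 0.2, 0.6, 0.2],
    [0.2, 0.2, 0.2, 0.6],
]

def apply_mix(matrix, x):
    """Mix the lanes: out[i] = sum over j of matrix[i][j] * x[j]."""
    return [sum(w * v for w, v in zip(row, x)) for row in matrix]

x = [1.0] * LANES
for _ in range(DEPTH):
    x = apply_mix(mix, x)

gain = max(abs(v) for v in x)  # the input peak was 1.0, so this is the amplification
print(f"amplification after {DEPTH} layers: {gain:.0f}x")
```

A 20% bump per layer sounds harmless. Compounded over 40 layers, it lands well past a thousandfold.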

3. The Pizza Law of Sanity

DeepSeek realized they needed the best of both worlds: the massive bandwidth of the superhighway, but the safety and stability of the old single-lane road.

So, they dug up a piece of math from 1967 called the Sinkhorn-Knopp algorithm and used it to enforce a new rule they call Manifold-Constrained Hyper-Connections (mHC).

I know. “Manifold-Constrained” sounds like something that happens to a spaceship in Star Trek.

But the concept is actually hilarious. I call it the Pizza Law.

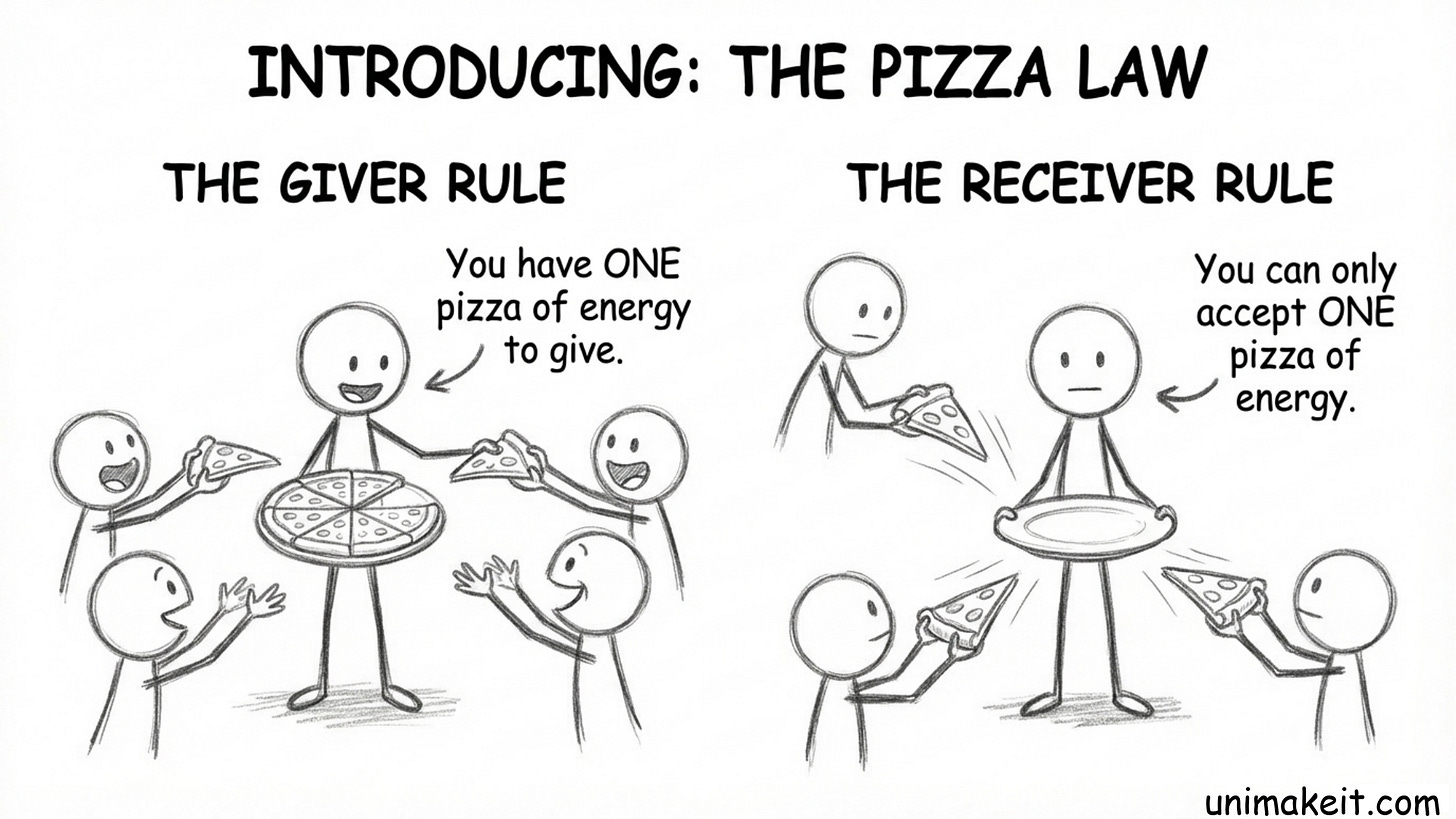

They forced the model’s wide connections to behave like doubly stochastic matrices: grids of non-negative weights in which every row and every column adds up to exactly 1. Here is what that means in plain English:

The Giver Rule: You (a channel, one lane of the highway) have exactly one pizza of energy to give. You can give a slice to Channel A and a slice to Channel B. But you cannot give everyone a whole pizza. Your total output must equal 100%.

The Receiver Rule: You can only eat exactly one pizza. If 50 people try to feed you, you have to shrink their slices down until they all fit on one plate. Your total input must equal 100%.

That’s it.
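Here is a minimal sketch of how the Sinkhorn-Knopp idea enforces both rules at once: keep rescaling the givers, then the receivers, until everyone's slices add up to one pizza. The function name `sinkhorn_knopp`, the `raw` matrix, and the 50 iterations are assumptions for illustration, and the convention that `m[i][j]` is the slice lane i gives to lane j is mine. How the paper wires this into a real model is not shown here.

```python
def sinkhorn_knopp(matrix, iterations=50):
    """Alternately rescale rows and columns of a positive matrix so both sum to 1.

    Assumed convention: m[i][j] is the slice lane i gives to lane j.
    """
    m = [row[:] for row in matrix]  # work on a copy
    n = len(m)
    for _ in range(iterations):
        # Giver Rule: each row's slices add up to one pizza.
        for i in range(n):
            total = sum(m[i])
            m[i] = [v / total for v in m[i]]
        # Receiver Rule: each column's slices add up to one pizza.
        for j in range(n):
            total = sum(m[i][j] for i in range(n))
            for i in range(n):
                m[i][j] /= total
    return m

# Arbitrary positive weights that break both rules to start with.
raw = [
    [2.0, 1.0, 0.5],
    [0.3, 4.0, 1.0],
    [1.0, 0.2, 3.0],
]
balanced = sinkhorn_knopp(raw)
row_sums = [sum(row) for row in balanced]
col_sums = [sum(balanced[i][j] for i in range(3)) for j in range(3)]
print(row_sums)  # each very close to 1
print(col_sums)  # each very close to 1
```

Fixing the rows nudges the columns out of line and vice versa, but for strictly positive weights the back-and-forth settles down quickly.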

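By forcing every connection to follow the Pizza Law, they created a closed loop of energy conservation: no channel can hand out more signal than it took in, so nothing compounds out of control from layer to layer.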

The result? That 3,000x signal explosion dropped to a polite 1.6x. The model stopped screaming and started thinking. It retained the capacity of the superhighway but regained the stability of the single lane.
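To see why the Pizza Law keeps a lid on things, here is a toy sketch with a hand-written doubly stochastic matrix. The matrix `pizza_mix`, the starting signal, and the 40 layers are all invented for illustration. It only shows that this kind of mixing cannot amplify on its own; it leaves out everything else a real layer does, so it will not reproduce the paper's 1.6x figure.

```python
# A hand-written doubly stochastic matrix: every row and every column sums to 1.
pizza_mix = [
    [0.5, 0.3, 0.2],
    [0.2, 0.5, 0.3],
    [0.3, 0.2, 0.5],
]

def apply_mix(matrix, x):
    """Mix the lanes: out[i] = sum over j of matrix[i][j] * x[j]."""
    return [sum(w * v for w, v in zip(row, x)) for row in matrix]

x = [3.0, -1.0, 0.5]
start_peak = max(abs(v) for v in x)
start_total = sum(x)

for _ in range(40):
    x = apply_mix(pizza_mix, x)

gain = max(abs(v) for v in x) / start_peak
print(f"gain after 40 layers: {gain:.3f}x")
print(f"total before: {start_total:.3f}, after: {sum(x):.3f}")
```

The peak never grows past where it started, and the total across lanes stays put. That is the closed loop in action.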

The Takeaway

We usually assume that Scale = More Freedom.

“If I widen the road, I can drive however I want.”

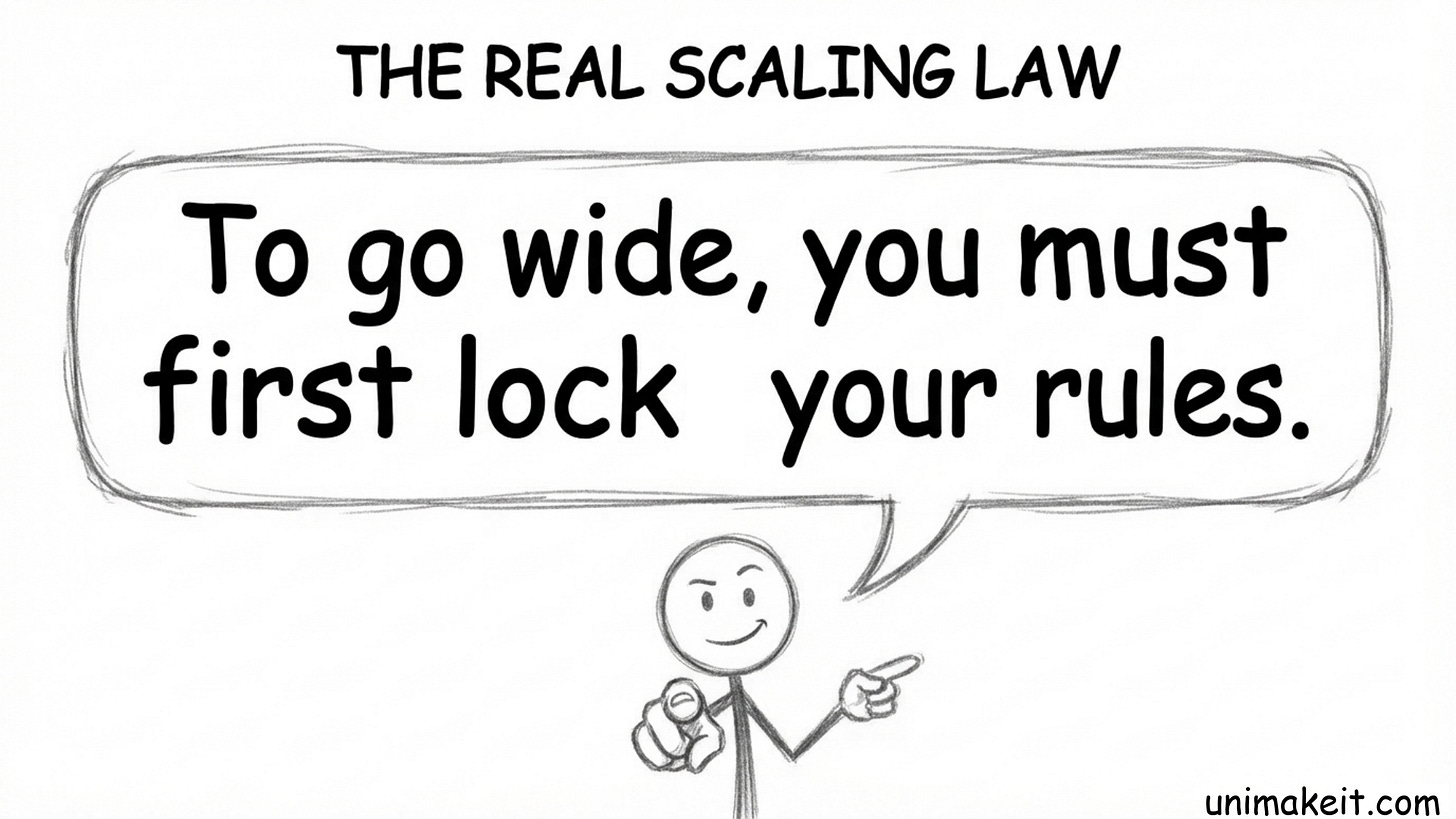

DeepSeek proves the counter-intuitive truth: Scale requires stricter Constraints.

They successfully built a massive “12-lane superhighway,” but it only worked because they mathematically forced every lane to obey the Pizza Law. Without this constraint, the signal didn’t just grow; it exploded into noise (3,000x amplification).

They paid a tiny 6.7% “stability tax” in training time to unlock scalability.

This is the ultimate lesson for any complex system, whether it’s a neural network or your career:

Unconstrained freedom is just chaos waiting to happen.

To go wide, you must first lock down your rules.

Go define your Pizza Law.

Links:

https://www.arxiv.org/abs/2512.24880