Here is the thing about humans: We are control freaks.

We love to believe that if we just think hard enough, we can design the perfect thing. A perfect city, a perfect diet, or in this case, a perfect electronic brain.

Rich Sutton tried to slap us out of this delusion back in 2019 with The Bitter Lesson. He basically said: “Stop trying to teach AI how you think. You are not that smart. Just give it a massive computer and let it figure it out.”

It hurt our feelings, but he was right. Computation beat human cleverness.

But now, in 2025, we are doing it again.

We are staring at a new problem: The Library is Empty.

We fed the AI every book, every tweet, and every cat meme in existence. Now the AI is looking at us like a hungry teenager at an empty fridge. “What’s next?”

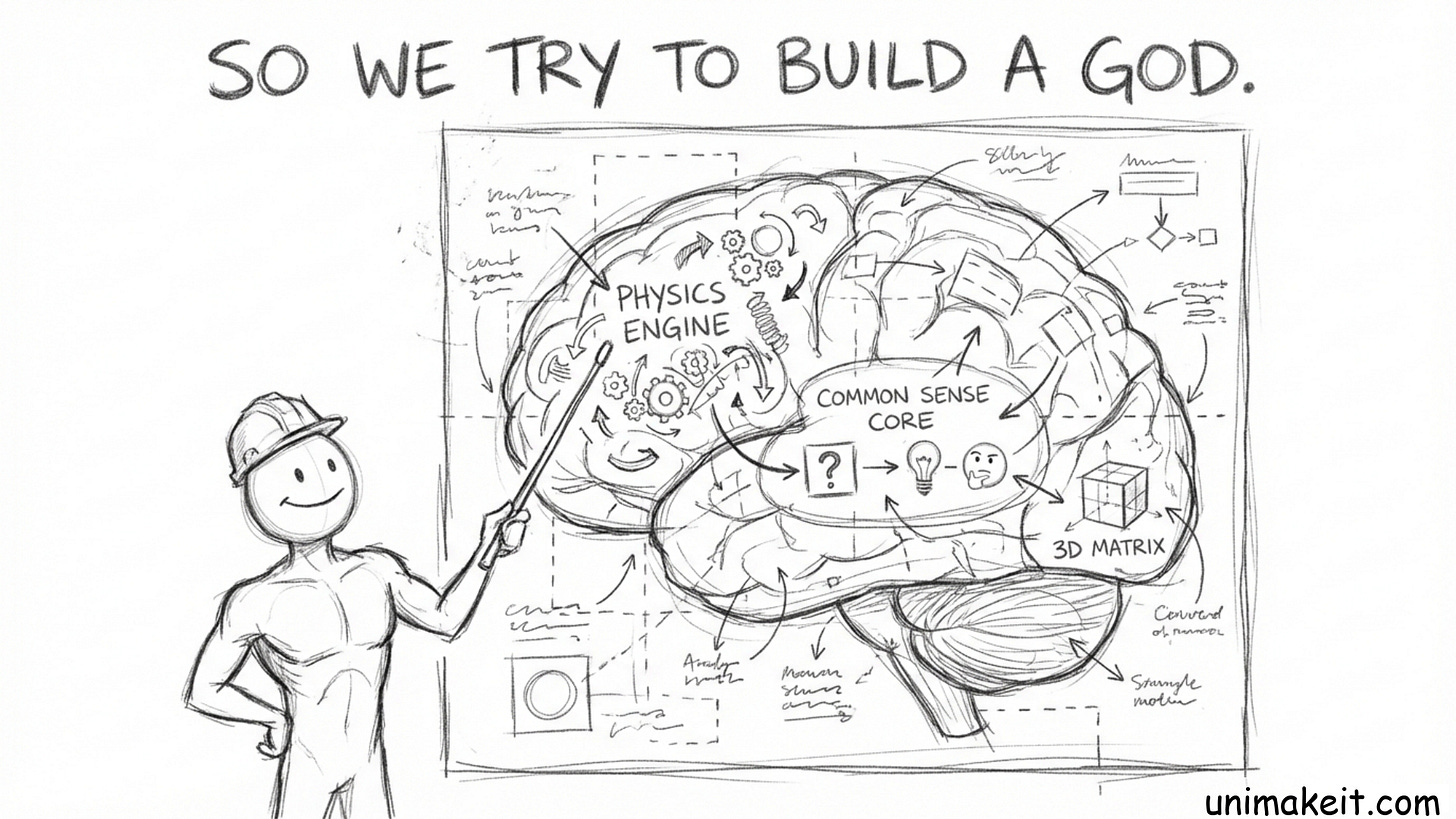

And the smartest people in the room, Yann LeCun and Fei-Fei Li, are both saying some version of the same thing: “Let’s build a God Model.”

Yann wants to code a “Physics Engine” into the model’s brain so it understands gravity. Fei-Fei wants to build a “3D Matrix” so it can learn spatial reasoning. They are trying to build a single, perfect, omniscient entity.

They are trying to be Intelligent Designers.

But here is the Ecological Lesson, and it might be even bitterer than the first one:

You cannot design a Superintelligence. You have to grow a Civilization.

Here is why.

1. The “Lonely God” Problem

Imagine you cloned Einstein. But you put him in a white room with nothing to do. No books, no other people, no physics problems. Just him and a white wall.

Does he invent General Relativity? No. He probably just goes insane and starts eating his own hair.

Intelligence isn’t a thing stored in a brain. Intelligence is what happens when a brain bumps into a problem. And the hardest problems don’t come from static data. They come from other brains.

2. The Complexity Trap

We are trying to teach AI “physics” or “common sense” by building simulators. But reality is messy. The wind blows weirdly. Floors are slippery. People are irrational.

Trying to code all that complexity into a World Model is like trying to teach a toddler how to walk by explaining the mechanics of a gyroscope. It doesn’t work. The toddler learns to walk because if they don’t, they can’t get to the cookie jar.

3. The Solution: The Mosh Pit

If we want AI to keep getting smarter forever, we need to stop building a “Student” and start building a “Schoolyard.”

Actually, more like a Gladiator Arena.

We need to dump a billion little AI agents into a virtual world and give them conflicting goals.

Agent A wants to hide a secret message.

Agent B wants to find it.

Suddenly, Agent A invents encryption. Not because we taught it math, but because Agent B was getting too good at snooping.

Then Agent B invents code-breaking. So Agent A invents quantum encryption.

See what happened? The “Curriculum” wasn’t written by a human. The Curriculum was written by the struggle.
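If you want to poke at this dynamic yourself, here is a toy sketch in Python. It is nowhere near real encryption or real learning agents. Everything in it is an assumption made up for illustration: the `Hider` and `Seeker` classes, the XOR "cipher," the rule that whoever loses a round adapts, and the shortcut that the seeker can check a guess against the true message. The only point is that nobody hand-writes the difficulty curve; each side's progress sets the other side's next problem.

```python
import random
from dataclasses import dataclass


@dataclass
class Hider:
    """Hypothetical Agent A: hides a message behind a toy XOR key."""
    key_bits: int = 1  # key space has 2 ** key_bits possible keys

    def hide(self, message: int, rng: random.Random) -> int:
        key = rng.randrange(2 ** self.key_bits)
        return message ^ key  # ciphertext


@dataclass
class Seeker:
    """Hypothetical Agent B: brute-forces keys within a fixed budget."""
    budget: int = 1  # number of keys it may try per round

    def crack(self, ciphertext: int, message: int, key_bits: int) -> bool:
        # Assumption: the seeker can test each guess against the true
        # message (a known-plaintext shortcut to keep the toy small).
        tries = min(self.budget, 2 ** key_bits)
        return any((ciphertext ^ guess) == message for guess in range(tries))


def arms_race(rounds: int, seed: int = 0) -> tuple[Hider, Seeker]:
    """Run the contest; after each round, the loser escalates."""
    rng = random.Random(seed)
    hider, seeker = Hider(), Seeker()
    for _ in range(rounds):
        message = rng.randrange(256)
        ciphertext = hider.hide(message, rng)
        if seeker.crack(ciphertext, message, hider.key_bits):
            hider.key_bits += 1  # Agent A lost: use a bigger key space
        else:
            seeker.budget *= 2   # Agent B lost: search harder next time
    return hider, seeker


if __name__ == "__main__":
    hider, seeker = arms_race(rounds=12)
    print(f"hider key bits: {hider.key_bits}, seeker budget: {seeker.budget}")
```

Exactly one side escalates every round, so the total "effort" in the system only ever goes up. No human wrote that schedule; it falls out of the contest.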

4. The Garden vs. The Architect

The Ecological Lesson tells us that the next leap in AI won’t come from a better architecture (sorry, Transformers). It will come from a better Ecosystem.

We need to stop trying to be the Architect who designs the cathedral. We need to be the Gardener who throws a bunch of seeds in the dirt, adds some manure (computation), and watches the chaos unfold.

In this ecosystem:

Physics isn’t a module you code; it’s the rules of the game you have to master to survive.

Language isn’t a dataset you memorize; it’s the tool you use to lie to your enemies or ally with your friends.

Intelligence isn’t a destination; it’s an arms race.

The Bottom Line

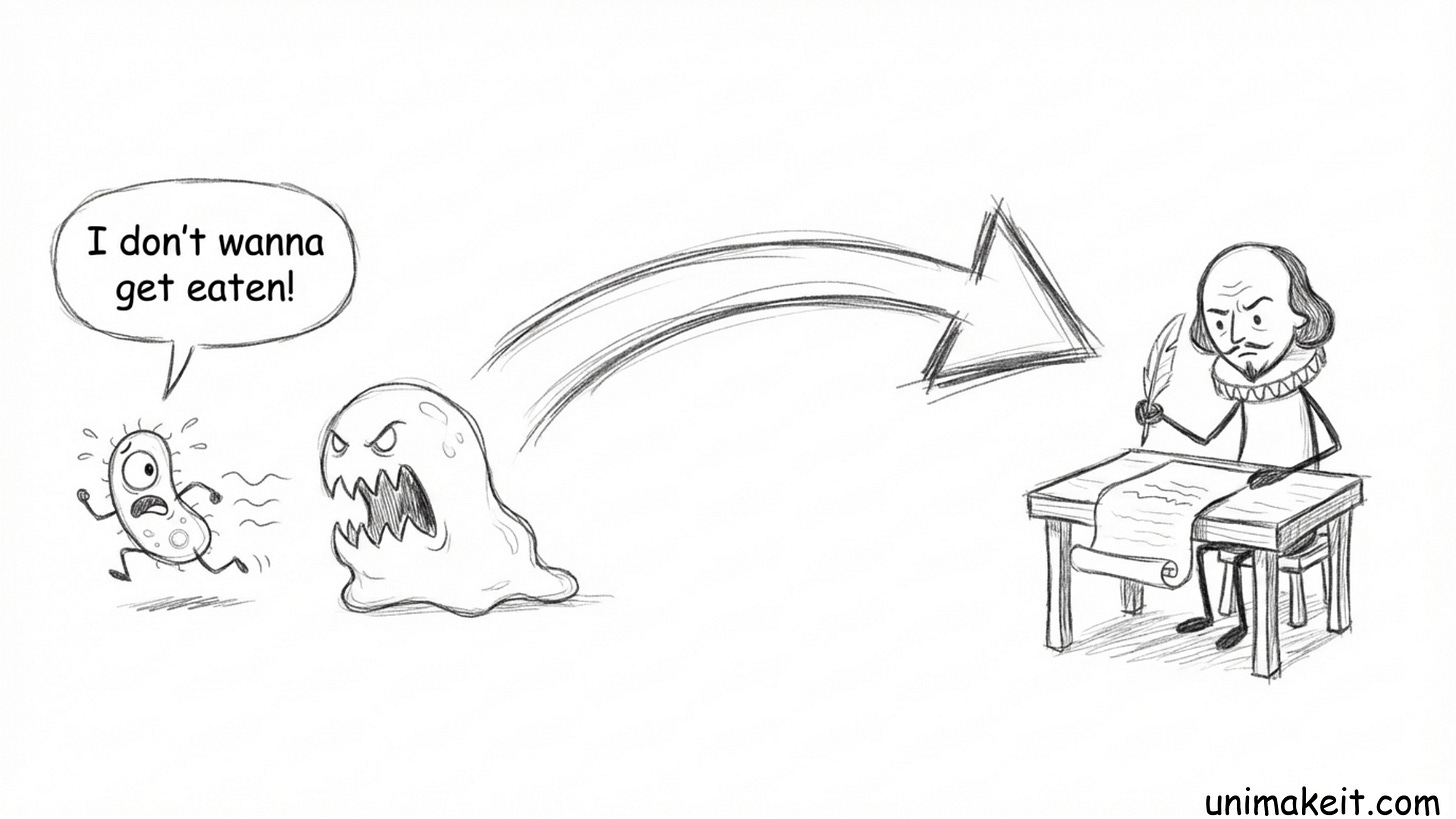

We want to build a God. But nature didn’t start with a God. Nature started with a single-celled organism that really, really didn’t want to get eaten.

And a few billion years of “not wanting to get eaten” later, you get Shakespeare.

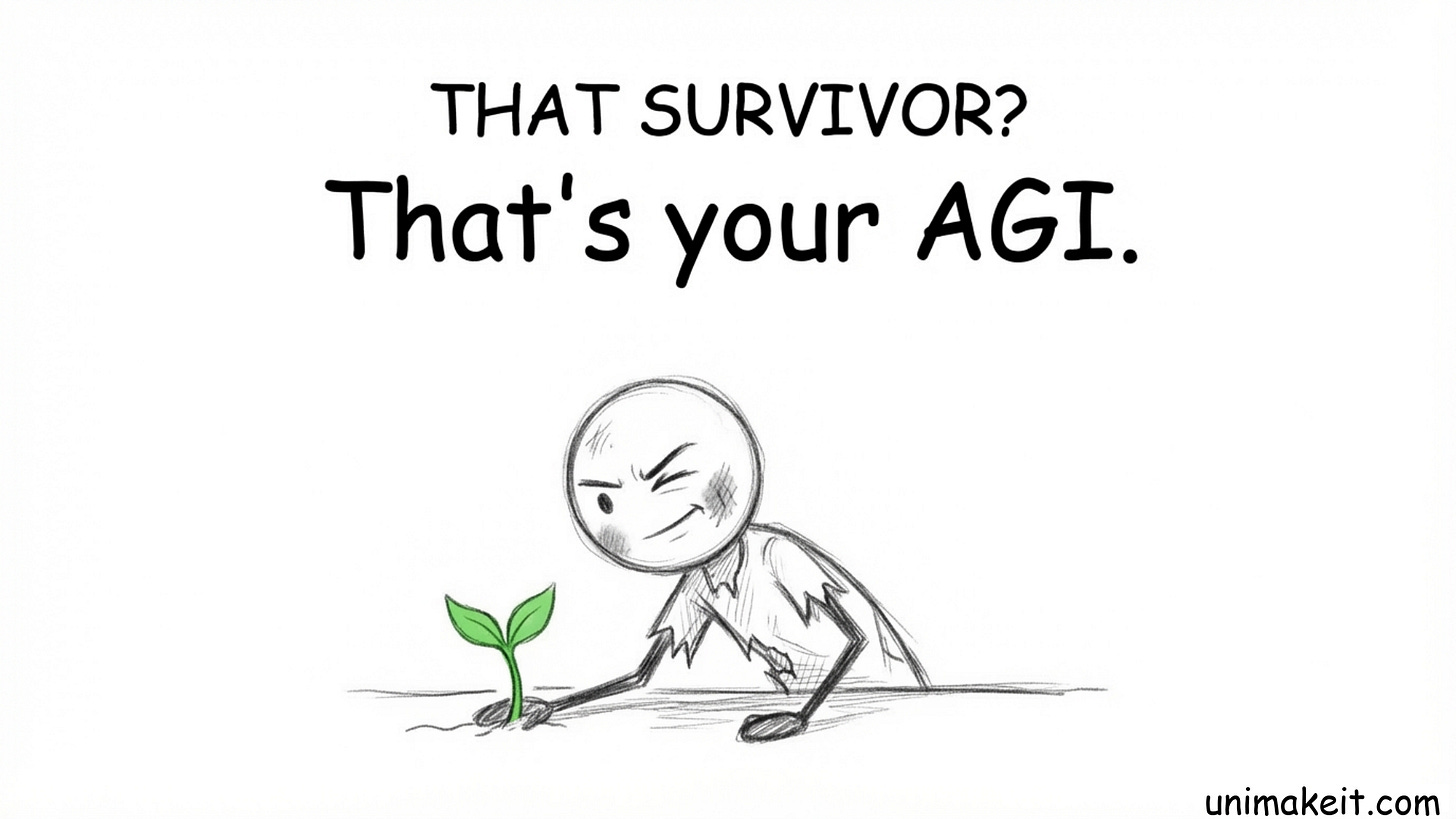

So, let’s stop trying to code the perfect mind. Let’s build the perfect mosh pit, throw the AIs in, and see who crawls out.

That survivor? That’s your AGI.

Links:

Rich Sutton’s homepage: http://incompleteideas.net

Rich Sutton, “The Bitter Lesson”: http://incompleteideas.net/IncIdeas/BitterLesson.html

The Godmother of AI on jobs, robots & why world models are next | Dr. Fei-Fei Li